The AI Spam Flood: Analyzing the Industrialization of Digital Deception and the Race for Authenticity

The release of highly capable large language models (LLMs) has democratized content creation, but this power comes with a severe shadow: the industrial-scale creation of low-quality, algorithmically generated "spam." Recent reports indicating that over 3,000 distinct "AI content farms" have already been flagged by watchdog systems like NewsGuard and Pangram Labs serve as a stark warning. This is not merely a nuisance; it represents the rapid industrialization of digital deception.

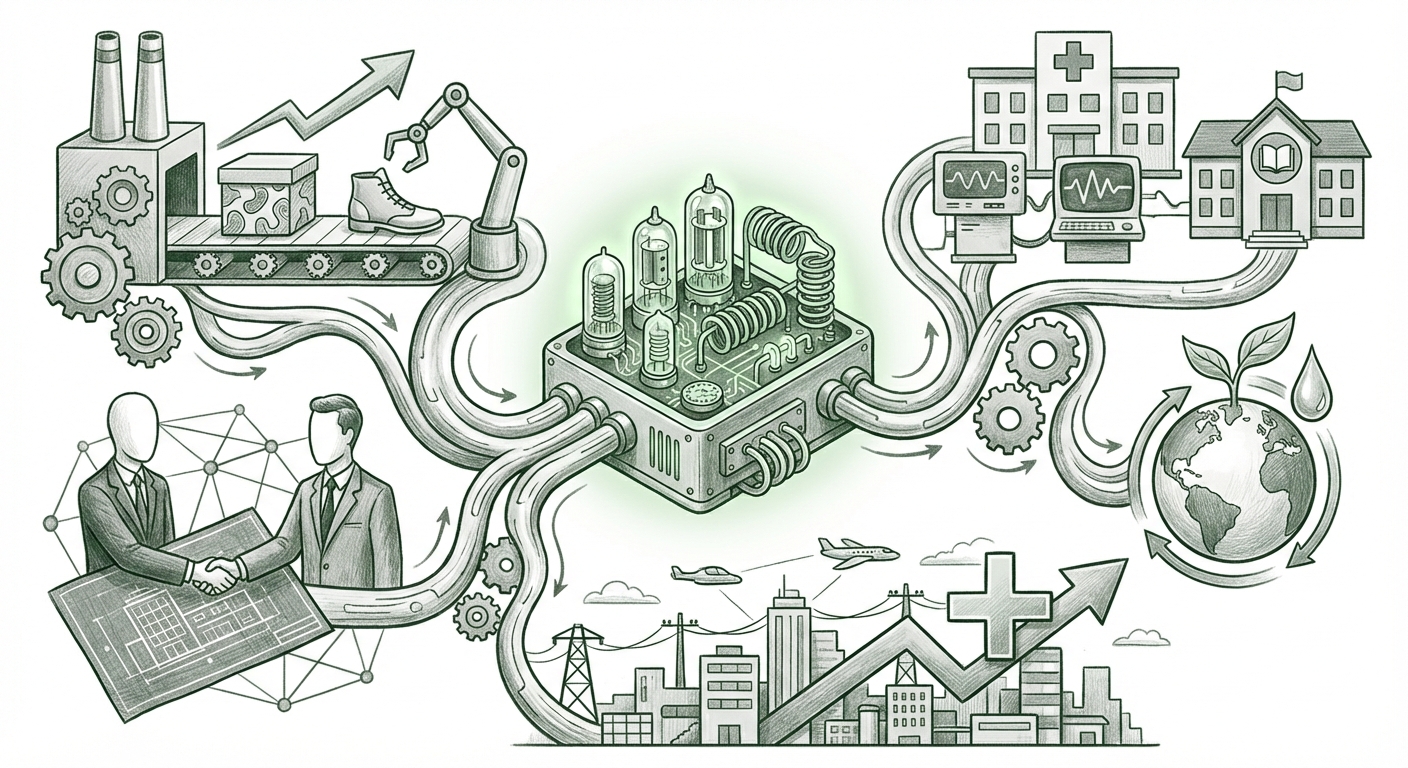

As an AI technology analyst, I am shifting my focus from the potential of AI to its systemic risks. The current situation demands a clear understanding of the economic drivers, the technological arms race, and the countermeasures required to preserve the integrity of the public information space. This article synthesizes current trends to outline what this surge means for the future of AI, business, and society.

The Scale of the Threat: From Hobbyist to Factory Floor

The critical data point is the sheer volume: more than 3,000 flagged sites, with hundreds more appearing monthly. This signifies a transition from experimental misuse to an organized, replicable business model. These farms are not typically interested in nuanced, factual reporting; their goal is mass content production designed solely to capture fragmented attention and convert it into revenue.

1. The Economic Engine: Why Content Farms Thrive

The primary driver behind this explosion is simple economics. AI tools slash the cost of content production to near zero while radically increasing output. We must look deeper into the monetization model to understand why this trend is accelerating.

The operators' goal is usually revenue from programmatic advertising and affiliate marketing, two strategies that reward high traffic volume regardless of content quality. A closer look at the monetization model shows that these sites are designed to game the system:

- Programmatic Ads: By filling thousands of pages with keyword-stuffed, slightly varied content, operators aim to serve millions of low-value ad impressions. The volume compensates for the low payout per impression.

- Affiliate Link Hijacking: These sites often create "review" pages or "best of" lists, stuffing them with AI-generated summaries and recommendations, all leading to affiliate purchase links. The speed of generation allows them to target lucrative, high-intent commercial keywords instantly.
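
The volume argument above can be made concrete with a rough back-of-the-envelope model. The sketch below is illustrative only: the `FarmAssumptions` fields and every number in the example are hypothetical placeholders, not figures from NewsGuard, Pangram Labs, or any ad network.

```python
from dataclasses import dataclass


@dataclass
class FarmAssumptions:
    """Hypothetical inputs; none of these values come from real reports."""
    pages: int                          # generated pages on the site
    monthly_views_per_page: float       # average page views per page per month
    ads_per_page: int                   # ad slots shown on each page view
    cpm_usd: float                      # revenue per 1,000 ad impressions
    monthly_cost_per_page_usd: float    # generation plus hosting cost per page


def monthly_impressions(a: FarmAssumptions) -> float:
    """Total ad impressions served per month across all pages."""
    return a.pages * a.monthly_views_per_page * a.ads_per_page


def monthly_ad_revenue(a: FarmAssumptions) -> float:
    """Ad revenue per month; CPM is priced per 1,000 impressions."""
    return monthly_impressions(a) / 1000 * a.cpm_usd


def monthly_cost(a: FarmAssumptions) -> float:
    """Production and hosting cost per month."""
    return a.pages * a.monthly_cost_per_page_usd


if __name__ == "__main__":
    # Made-up example: many pages, little traffic each, very low CPM.
    farm = FarmAssumptions(
        pages=10_000,
        monthly_views_per_page=20,
        ads_per_page=4,
        cpm_usd=0.50,
        monthly_cost_per_page_usd=0.01,
    )
    print(f"impressions: {monthly_impressions(farm):,.0f}")
    print(f"revenue:     ${monthly_ad_revenue(farm):,.2f}")
    print(f"cost:        ${monthly_cost(farm):,.2f}")
```

With these made-up inputs, 800,000 impressions at a $0.50 CPM yield about $400 a month against roughly $100 in cost. The point is the shape of the model rather than the numbers: when the cost per page approaches zero, even very poor per-page traffic can clear the bar, and revenue scales linearly with page count.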

For business and media investors, this means the digital advertising ecosystem is experiencing an "AdTech vacuum"—a flood of low-quality inventory driving down overall platform quality and making it harder for legitimate publishers to maintain fair value for their ad space. This is a key indicator that the current economic incentive structure favors digital pollution.

2. The Platform Defense: The Algorithm Arms Race

If the supply of spam content is infinite, the only solution is efficient filtering. The battleground is now firmly fixed on major search engines, primarily Google, and social media platforms.

Search engines are rapidly evolving their defenses, most notably through updates aimed at prioritizing authentic human experience over sheer content volume. Google's ranking updates show a clear pivot: its algorithms are moving beyond simply detecting text patterns and are looking for signals of genuine expertise, first-hand experience, and authoritativeness. These are qualities that current LLMs struggle to mimic convincingly without human oversight.

This leads to an arms race. As soon as a platform rolls out a countermeasure (e.g., penalizing thin content), the spam operators fine-tune their generation scripts to bypass the new heuristic. The speed at which they iterate is alarming, forcing platforms into continuous, reactive updates rather than static enforcement policies.

The Technological Undercurrent: Detection, Provenance, and Watermarking

The flagging of more than 3,000 sites suggests that detection technology is advancing alongside generation technology. The work being done by organizations like NewsGuard and Pangram Labs moves beyond simple readability tests and into the statistical properties of synthetic text.

3. Decoding Deception: Advanced Detection Methods

To understand the future implications, we must examine the technology underpinning the defenses. This means looking at advanced methods for detecting synthetic text and at the related problem of provenance tracking, which asks where a piece of content originated.

Research on synthetic text detection and AI watermarking is focused on two main areas:

- Statistical Fingerprinting: LLMs, despite their fluency, often exhibit statistical patterns, word choices, and predictable entropy levels that differ slightly from human writing. Detectors look for these subtle mathematical fingerprints.

- Cryptographic Watermarking: This is the 'holy grail' for provenance. It involves embedding an invisible, mathematical signal directly into the output of the model when the content is generated. If the content is AI-created, the watermark should be recoverable. However, this requires cooperation from the LLM developers (OpenAI, Google, Anthropic), and spammers often use open-source or self-hosted models that lack this feature.
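
To give a feel for what a statistical fingerprint is, here is a deliberately simple sketch that computes two surface features: word-level Shannon entropy and the variation in sentence length. Both features and both function names are illustrative assumptions. Production detectors generally rely on language-model scores and trained classifiers, and nothing here reflects how NewsGuard or Pangram Labs actually work.

```python
import math
import re
from collections import Counter
from statistics import mean, pstdev


def word_entropy(text: str) -> float:
    """Shannon entropy, in bits per word, of the text's word distribution."""
    words = re.findall(r"[a-z']+", text.lower())
    if not words:
        return 0.0
    total = len(words)
    counts = Counter(words)
    return -sum((c / total) * math.log2(c / total) for c in counts.values())


def sentence_length_variation(text: str) -> float:
    """Coefficient of variation (std / mean) of sentence lengths in words.

    Very uniform sentence lengths give a value near 0.
    """
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    lengths = [len(s.split()) for s in sentences]
    if len(lengths) < 2:
        return 0.0
    return pstdev(lengths) / mean(lengths)


if __name__ == "__main__":
    sample = "Hi. This is a much longer sentence here."
    print(word_entropy(sample))
    print(sentence_length_variation(sample))
```

Features like these are weak on their own and easy to game. A real system would combine many signals and calibrate them against labeled human and machine text before drawing any conclusion about a given page.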

The challenge here is that any effective watermark can eventually be removed, obscured, or bypassed, whether through "human polishing" or through models specifically trained to strip out such signals. The future integrity of the web rests on making content provenance, that is, proving where content *came from*, a more fundamental layer of the internet infrastructure.
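
The fragility of watermarks can be illustrated with a toy model. The sketch below uses a keyed "green list" scheme of the kind proposed in the research literature: a secret key and the previous token decide which next tokens count as "green", the generator prefers green tokens, and the detector measures how far the green count sits above chance. This is a swapped-in illustration, not the scheme used by any named vendor; `SECRET_KEY`, `VOCAB`, the toy generator, and the `polish` function are all hypothetical.

```python
import hashlib
import math
import random
from typing import List, Sequence

SECRET_KEY = b"demo-key"                   # hypothetical shared secret
GREEN_FRACTION = 0.5                       # share of tokens that count as green
VOCAB = [f"w{i}" for i in range(50)]       # toy vocabulary standing in for a tokenizer


def is_green(prev_token: str, token: str) -> bool:
    """Keyed hash of (previous token, token) decides green-list membership."""
    data = SECRET_KEY + prev_token.encode() + b"|" + token.encode()
    return hashlib.sha256(data).digest()[0] < 256 * GREEN_FRACTION


def generate_watermarked(length: int, rng: random.Random) -> List[str]:
    """Toy generator: among random candidates, prefer one that is green."""
    tokens = [rng.choice(VOCAB)]
    while len(tokens) < length:
        candidates = rng.sample(VOCAB, k=10)
        green = [c for c in candidates if is_green(tokens[-1], c)]
        tokens.append(green[0] if green else candidates[0])
    return tokens


def watermark_z_score(tokens: Sequence[str]) -> float:
    """Standard deviations by which the green count exceeds chance."""
    n = len(tokens) - 1                    # number of (previous, current) pairs
    if n < 1:
        return 0.0
    green = sum(is_green(a, b) for a, b in zip(tokens, tokens[1:]))
    expected = GREEN_FRACTION * n
    std = math.sqrt(n * GREEN_FRACTION * (1 - GREEN_FRACTION))
    return (green - expected) / std


def polish(tokens: Sequence[str], fraction: float, rng: random.Random) -> List[str]:
    """Simulate editing by replacing roughly `fraction` of tokens at random."""
    return [rng.choice(VOCAB) if rng.random() < fraction else t for t in tokens]


if __name__ == "__main__":
    rng = random.Random(0)
    marked = generate_watermarked(200, rng)
    print("watermarked z:", round(watermark_z_score(marked), 2))
    print("polished z:   ", round(watermark_z_score(polish(marked, 0.8, rng)), 2))
```

In this toy setting the untouched output scores far above chance, while heavily edited output drifts back toward zero. That is the weakness described above: the signal lives in token choices, so rewriting enough tokens erases it. Text from a model that never applied the watermark would also score near zero, which is why self-hosted models sidestep the scheme entirely.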

Future Implications: A Fork in the Road for AI Development

The flood of AI spam is more than a content issue; it is a fundamental stress test for the entire digital information ecosystem. Where we go from here depends on the response across governance, technology, and business practices.

The Corrosion of Trust and the Misinformation Vector

The broader surge of low-quality content and misinformation paints a worrying picture. When the internet is saturated with content that is technically fluent but factually unreliable or contextually shallow, the signal-to-noise ratio collapses. Users become exhausted, leading to information fatigue and, critically, a generalized distrust in *all* online sources, including verified news outlets.

For AI, this is an existential threat. If the primary use case demonstrated by this technology explosion is optimized deception and content flooding, public and regulatory backlash will inevitably slow the responsible adoption of beneficial AI technologies. The technology itself risks being labeled fundamentally untrustworthy.

Actionable Insights for Businesses and Developers

This industrialization of spam requires strategic shifts from legitimate actors:

- Redefining SEO: Search Engine Optimization (SEO) is dying in its traditional form. The future demands Search Experience Optimization (SXO). Success will rely on demonstrating real-world connection, personal authority, and unique data that AI cannot easily fabricate.

- Investment in Provenance Tools: Businesses relying on digital content must integrate content verification tools, just as they integrate cybersecurity. Trust in third-party content suppliers must be established through verifiable provenance chains, not just reputation.

- The AdTech Reckoning: Advertisers must demand greater transparency and quality guarantees from their programmatic partners. If ad spend continues to fund the spam ecosystem, the entire digital advertising industry faces reputational collapse. Quality-over-quantity metrics must become the standard.

- Focus on Vertical AI: The generic LLM spam strategy will continue until it becomes unprofitable. The next wave of value will come from highly specialized, fact-checked, and domain-specific AI tools that offer unique, non-replicable insights—the opposite of the generic content farm.
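
As one way to picture the "verifiable provenance chains" mentioned in the list above, the following sketch links content records together so that each record signs the hash of its content and the signature of the record before it. It is a minimal illustration under stated assumptions: the record fields and function names are hypothetical, and it uses a shared-secret HMAC for brevity, whereas a production system would more likely use public-key signatures so that verifiers never hold the signing key.

```python
import hashlib
import hmac
import json
from typing import Dict, List, Sequence


def _sha256_hex(text: str) -> str:
    return hashlib.sha256(text.encode("utf-8")).hexdigest()


def _sign(unsigned: Dict[str, str], key: bytes) -> str:
    """HMAC-SHA256 over a canonical JSON encoding of the record."""
    payload = json.dumps(unsigned, sort_keys=True).encode("utf-8")
    return hmac.new(key, payload, hashlib.sha256).hexdigest()


def make_record(content: str, source: str, prev_signature: str, key: bytes) -> Dict[str, str]:
    """Create a signed record that points back at the previous record."""
    record = {
        "source": source,
        "content_sha256": _sha256_hex(content),
        "prev_signature": prev_signature,
    }
    record["signature"] = _sign(record, key)
    return record


def verify_chain(records: Sequence[Dict[str, str]], contents: Sequence[str], key: bytes) -> bool:
    """Check signatures, back-links, and content hashes, in order."""
    if len(records) != len(contents):
        return False
    prev_signature = ""
    for record, content in zip(records, contents):
        unsigned = {k: v for k, v in record.items() if k != "signature"}
        if not hmac.compare_digest(_sign(unsigned, key), record["signature"]):
            return False
        if record["prev_signature"] != prev_signature:
            return False
        if record["content_sha256"] != _sha256_hex(content):
            return False
        prev_signature = record["signature"]
    return True


if __name__ == "__main__":
    key = b"hypothetical-supplier-key"
    draft, final = "Original draft text.", "Edited final text."
    chain: List[Dict[str, str]] = [make_record(draft, "reporter", "", key)]
    chain.append(make_record(final, "editor", chain[0]["signature"], key))
    print(verify_chain(chain, [draft, final], key))
    print(verify_chain(chain, [draft, "Tampered text."], key))
```

The design choice worth noting is the back-link: because each signature covers the previous signature, a buyer can detect both altered content and a reordered or truncated history, which is more than a reputation check alone can offer.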

Conclusion: Choosing the Future of Digital Information

The recent flagging of thousands of AI spam sites is a definitive milestone. It shows that generative AI can produce not just content but entire digital infrastructure built on deception, for the sole purpose of exploiting attention economics. This is the messy adolescence of the generative AI era.

The race is on between the speed of automated content generation and the sophistication of automated detection and verification systems. For AI to mature into a tool that genuinely enhances human knowledge and productivity, the industry—developers, regulators, and platform owners alike—must prioritize the integrity of the information layer over the short-term gains offered by mass-produced synthetic content. The future of AI is not just about what it can create, but what we choose to allow it to pollute.