The Context Revolution: Why Anthropic Just Made Million-Token AI Economically Viable

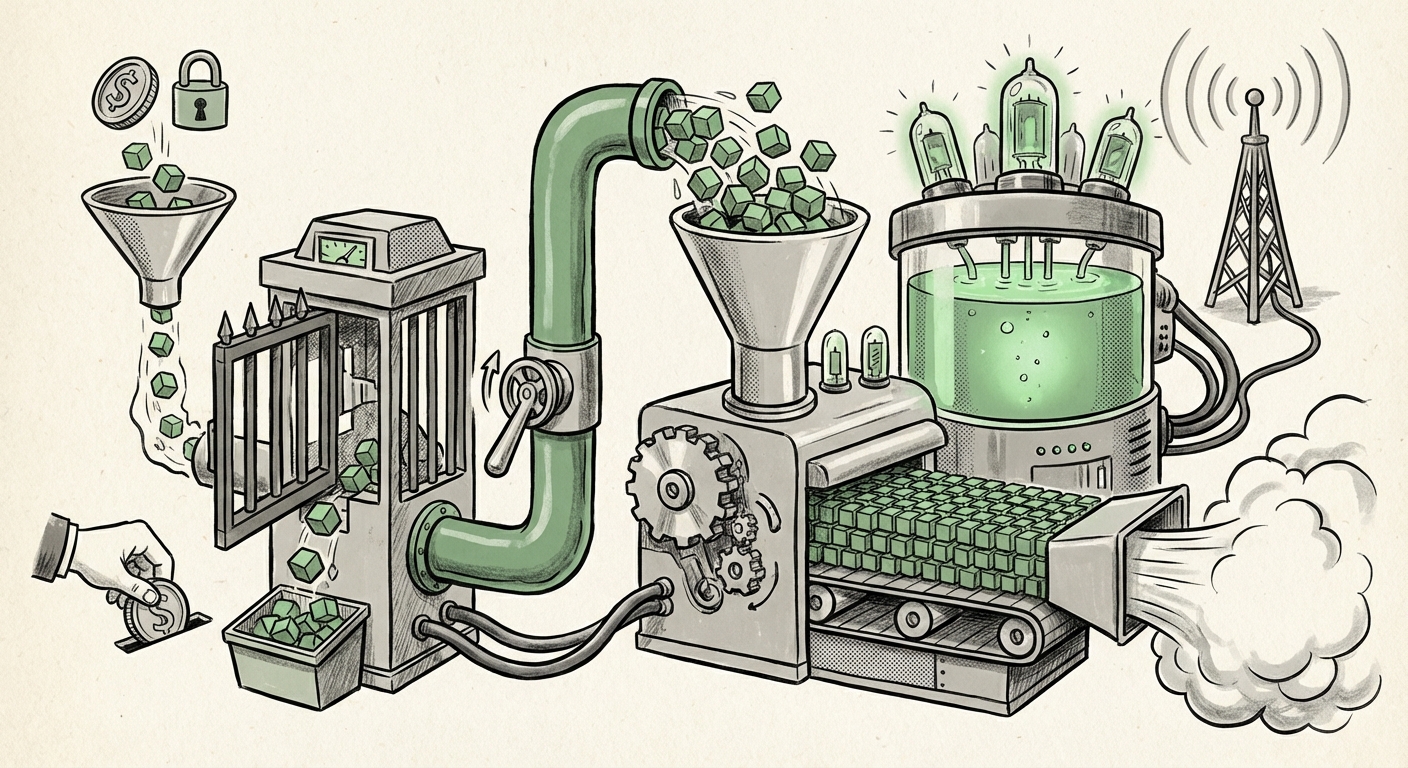

The race for Artificial Intelligence supremacy isn't just about raw intelligence anymore; it’s about economics and accessibility. A recent, pivotal development from Anthropic—the complete removal of the context window surcharge for their flagship models, Opus 4.6 and Sonnet 4.6—signals more than just a minor price adjustment. It marks a significant inflection point where the cost barrier to handling truly massive datasets has collapsed, ushering in a new era of deep, contextual AI application.

As an AI technology analyst, I view this as one of the most important commercial shifts of the year. When models like Claude 4.6 can process up to a million tokens (the equivalent of several long novels or a large software repository) without charging double the standard rate, the fundamental math of enterprise AI deployment changes overnight. This move forces every major player, and every company building with LLMs, to re-evaluate its strategy.

Deconstructing the Shift: From Luxury Feature to Commodity Capability

For months, the ability to handle extremely long context windows, stretching past 200,000 tokens toward 500,000 or even 1 million, was positioned as a premium feature. It carried a hefty surcharge, in some cases doubling the per-token price of input. This was logical: the cost of processing context grows faster than linearly with input length, because attention demands significantly more memory and compute during inference as the prompt gets longer. It was a feature reserved for the most critical, high-budget tasks.

Anthropic’s decision to drop this surcharge for Opus 4.6 and Sonnet 4.6 fundamentally changes this dynamic. It points to two possible drivers, most likely working in combination:

- Technical Efficiency Breakthrough: Anthropic may have achieved a major internal breakthrough in inference optimization, meaning the actual cost (in GPU time and memory) of processing a million-token context has dropped dramatically. This would align with advances in attention mechanisms and memory management in current LLM research.

- Aggressive Market Strategy: Anthropic may be deliberately using pricing to capture market share and drive adoption for use cases previously deemed too expensive, effectively treating massive context as a feature to be *standardized* rather than *premiumized*.

This immediate affordability puts capabilities once reserved for high-budget projects within reach of far more teams. Tasks that once required complex, layered retrieval systems can now often be accomplished simply by pasting the necessary information directly into the prompt. For scale, a million tokens holds roughly 750,000 words: enough for all of *War and Peace* (roughly 580,000 words in English translation) with room to spare, or the entire documentation set for a medium-sized enterprise software suite.
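The arithmetic behind these figures is easy to sketch. The snippet below assumes the common rule of thumb of about 0.75 English words per token (the ratio implied by the 750,000-word figure) and an approximate word count for *War and Peace*; both are assumptions, and real tokenizers will give different counts for other languages or for source code.

```python
# Back-of-envelope capacity check. WORDS_PER_TOKEN is a rule-of-thumb
# assumption for English prose; real tokenizers vary by language and content.
WORDS_PER_TOKEN = 0.75


def tokens_to_words(tokens: int) -> int:
    """Approximate number of English words that fit in `tokens` tokens."""
    return int(tokens * WORDS_PER_TOKEN)


def words_to_tokens(words: int) -> int:
    """Approximate number of tokens a text of `words` words consumes."""
    return round(words / WORDS_PER_TOKEN)


def fits_in_window(words: int, window_tokens: int = 1_000_000) -> bool:
    """True if a text of `words` words plausibly fits in the context window."""
    return words_to_tokens(words) <= window_tokens


if __name__ == "__main__":
    WAR_AND_PEACE_WORDS = 580_000  # approximate; varies by translation
    print(tokens_to_words(1_000_000))               # 750000
    print(fits_in_window(WAR_AND_PEACE_WORDS))      # True
    print(fits_in_window(2 * WAR_AND_PEACE_WORDS))  # False
```

In practice you would count tokens with the provider's own tokenizer rather than a fixed ratio; the heuristic is only good for quick sizing.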

The Context Wars Escalate: Competitive Dynamics

This action directly heats up the ongoing "Context Wars" between the major LLM providers. To understand the gravity of Anthropic’s move, we must look at the competitive landscape, which often dictates the pace of innovation in this sector.

Benchmarking Against the Titans

When one vendor effectively commoditizes a capability, competitors must react. The essential next step is to compare this move against the current long-context pricing tiers of OpenAI and Google; laying their API prices side by side by context length quickly reveals the pressure points.

If rivals maintain their surcharges, Anthropic instantly gains a massive cost advantage for any application that involves summarizing large documents, comparing numerous legal filings, or synthesizing vast amounts of internal chat data. This drives developers building on a budget directly toward Claude 4.6.
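A minimal cost model makes the size of that advantage concrete. The rates here ($3 and $15 per million input and output tokens, with a 2x input and 1.5x output multiplier above 200,000 input tokens) are illustrative assumptions, not quoted prices, and the sketch assumes the whole request is billed at the higher rate once the threshold is crossed, which is one plausible tiering scheme. Check each provider's current price page before using numbers like these.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Pricing:
    """USD per million tokens. Every number used below is hypothetical."""
    input_per_m: float
    output_per_m: float
    long_context_threshold: Optional[int] = None  # None means flat pricing
    input_multiplier: float = 1.0
    output_multiplier: float = 1.0


def request_cost(p: Pricing, input_tokens: int, output_tokens: int) -> float:
    """Cost of one request. Assumes the whole request is billed at the higher
    rate once input exceeds the threshold (one plausible tiering scheme)."""
    in_rate, out_rate = p.input_per_m, p.output_per_m
    if p.long_context_threshold is not None and input_tokens > p.long_context_threshold:
        in_rate *= p.input_multiplier
        out_rate *= p.output_multiplier
    return (input_tokens * in_rate + output_tokens * out_rate) / 1_000_000


if __name__ == "__main__":
    flat = Pricing(3.0, 15.0)
    surcharged = Pricing(3.0, 15.0, long_context_threshold=200_000,
                         input_multiplier=2.0, output_multiplier=1.5)
    # An 800k-token document review producing a 4k-token summary.
    print(f"flat:       ${request_cost(flat, 800_000, 4_000):.2f}")        # $2.46
    print(f"surcharged: ${request_cost(surcharged, 800_000, 4_000):.2f}")  # $4.89
```

Under these assumptions the same long-document job costs roughly twice as much on the surcharged tier, while requests under the threshold cost the same on both.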

The Competitive Response

The next crucial piece of the puzzle is the response from OpenAI (with models like GPT-4o) and Google, and specifically how they price long context. Will they swiftly match the new pricing to retain high-volume, high-context users? Or will they rely on perceived performance advantages in other areas to justify a continued premium? This competitive pressure is what ultimately benefits end users, as market-share battles lead to cheaper, more powerful tools for everyone.

Technical Underpinnings: How Do They Make It Cheaper?

For the engineering audience (the ML researchers and infrastructure architects), the core question is *how* this efficiency was achieved. The starting point is why long context is expensive in the first place: the computational complexity of the self-attention mechanism, the heart of the Transformer architecture, grows quadratically with the input length ($O(n^2)$). Doubling the context length quadruples the compute needed for the attention calculations.
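The quadratic claim can be checked with a few lines of arithmetic. The sketch below counts entries in the attention-score matrix and then uses a common rough estimate of a dense Transformer layer's forward FLOPs, about 24·n·d² for the projections and a 4x-wide MLP plus 4·n²·d for the two attention matrix multiplies. The model width `d = 8_192` is a hypothetical value, not a published Claude configuration, and the estimate ignores softmax, normalization, and causal-masking savings.

```python
def attention_score_entries(n: int) -> int:
    """Entries in one head's full n x n attention-score matrix (Q @ K^T)."""
    return n * n


def relative_attention_cost(n_new: int, n_old: int) -> float:
    """Ratio of attention-score work for n_new tokens versus n_old tokens."""
    return attention_score_entries(n_new) / attention_score_entries(n_old)


def attention_share(n: int, d_model: int) -> float:
    """Fraction of a dense layer's forward FLOPs spent in attention matmuls,
    using the rough estimate 24*n*d^2 (projections + 4x MLP) plus
    4*n^2*d (Q @ K^T and scores @ V). Ignores softmax, norms, causal savings."""
    linear = 24 * n * d_model * d_model
    attention = 4 * n * n * d_model
    return attention / (linear + attention)


if __name__ == "__main__":
    print(relative_attention_cost(200_000, 100_000))    # 4.0
    print(relative_attention_cost(1_000_000, 100_000))  # 100.0
    d = 8_192  # hypothetical model width
    # Prints roughly 8%, 67% and 95% for these three lengths.
    for n in (4_096, 100_000, 1_000_000):
        print(f"{n:>9,} tokens: attention ~{attention_share(n, d):.0%} of layer FLOPs")
```

The second function shows why the quadratic term matters most at exactly the lengths this pricing change targets: at short prompts the linear layers dominate, but near a million tokens attention accounts for almost all of the work under these assumptions.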

To drive costs down, providers must employ novel solutions. Publicly discussed techniques for cheaper long-context inference include:

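- FlashAttention and Optimized Kernels: Software improvements that compute exact attention in tiles held in fast on-chip GPU memory, avoiding materializing the full attention matrix and sharply cutting memory traffic, even though the arithmetic itself remains quadratic.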

- Windowing and Sparse Attention: Techniques where each token attends only to a subset of other tokens (a fixed local window, a sparse pattern, or a learned selection of the most relevant ones) rather than to *every* token, reducing the quadratic load.

- Optimized Memory Management: Innovations in how key-value (KV) caches are stored during generation, minimizing memory bottlenecks inherent in very long sequences.
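Two of these ideas lend themselves to quick sizing. The sketch below counts how many query-key pairs causal attention scores with and without a sliding window, and estimates KV-cache memory as one key and one value vector per token, per layer, per KV head. The window size (4,096) and model dimensions (80 layers, 8 KV heads, head size 128, 2-byte values) are hypothetical; they are not the configuration of any Claude model, which is not public.

```python
from typing import Optional


def causal_pairs(n: int, window: Optional[int] = None) -> int:
    """Query-key pairs scored by causal attention over n tokens. With a sliding
    window, each token attends to at most `window` tokens (itself included)."""
    if window is None or window >= n:
        return n * (n + 1) // 2
    # Tokens 1..window see all predecessors; every later token sees exactly `window`.
    return window * (window + 1) // 2 + (n - window) * window


def kv_cache_bytes(seq_len: int, n_layers: int, n_kv_heads: int,
                   head_dim: int, bytes_per_value: int = 2) -> int:
    """Memory for cached keys and values: one K and one V vector per token,
    per layer, per KV head (bytes_per_value=2 assumes fp16/bf16)."""
    return 2 * seq_len * n_layers * n_kv_heads * head_dim * bytes_per_value


if __name__ == "__main__":
    n = 1_000_000
    full, windowed = causal_pairs(n), causal_pairs(n, window=4_096)
    print(f"sliding window scores ~{full / windowed:.0f}x fewer pairs")
    # Hypothetical dimensions, chosen only for illustration.
    for seq_len in (100_000, 1_000_000):
        gb = kv_cache_bytes(seq_len, n_layers=80, n_kv_heads=8, head_dim=128) / 1e9
        print(f"{seq_len:>9,} tokens -> {gb:.1f} GB of KV cache")  # 32.8, then 327.7
```

The windowed count grows linearly in n instead of quadratically, while the KV cache grows linearly either way; at a million tokens and these assumed dimensions it runs to hundreds of gigabytes, which is why cache storage and management sit alongside attention kernels as a cost lever.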

If Anthropic has truly mastered these optimizations to the point where a token in a million-token prompt costs little more to serve than a token in a 100k-token prompt, this implies a fundamental leap in LLM serving efficiency that could cascade across the entire industry.

Transforming Application Architecture: The RAG Paradigm Shift

Perhaps the most profound implication lies in how we build applications on top of these models, particularly Retrieval-Augmented Generation (RAG).

Traditionally, RAG systems are complex layers designed to work around the context limitation. If an application needs 50 proprietary documents to answer a question, the RAG system must intelligently select the top 5 most relevant snippets, compress them, and feed them to the model. This process is error-prone, relies heavily on the quality of the embedding model, and, when many snippets are stitched together, can still suffer from the "lost in the middle" effect, where models underuse critical information placed in the middle of a long prompt.
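A stripped-down version of that retrieval step shows where the fragility comes from. This sketch substitutes simple word-count cosine similarity for the embedding model a production system would use, and the chunk size and `k=5` default are arbitrary choices; all function names are hypothetical. Whatever falls outside the top-k chunks never reaches the model at all.

```python
import math
import re
from collections import Counter
from typing import List


def _bag_of_words(text: str) -> Counter:
    """Lowercased word counts; a stand-in for a real embedding model."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def _cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def chunk(text: str, size: int = 50) -> List[str]:
    """Split a document into fixed-size word windows (no overlap)."""
    words = text.split()
    return [" ".join(words[i:i + size]) for i in range(0, len(words), size)]


def retrieve_top_k(query: str, documents: List[str], k: int = 5, size: int = 50) -> List[str]:
    """Return the k chunks most similar to the query; everything else is
    dropped before the model sees it, which is where retrieval errors creep in."""
    q = _bag_of_words(query)
    chunks = [c for doc in documents for c in chunk(doc, size)]
    chunks.sort(key=lambda c: _cosine(q, _bag_of_words(c)), reverse=True)
    return chunks[:k]


if __name__ == "__main__":
    docs = [
        "The refund policy allows returns within 30 days of purchase.",
        "Our office is closed on public holidays.",
        "Shipping usually takes five business days.",
    ]
    print(retrieve_top_k("What is the refund policy?", docs, k=1))
    # ['The refund policy allows returns within 30 days of purchase.']
```

Every knob here (chunk size, overlap, similarity function, k) is a place where the right passage can silently be left out, which is the complexity that long context lets some applications skip.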

With million-token context windows available affordably, developers can shift their strategy. Instead of complex retrieval, they can employ Context Dumping or Holistic Ingestion. For RAG architecture, this shift suggests:

- Simplified RAG: For many internal knowledge bases, developers can bypass complex chunking and retrieval entirely, simply injecting entire code repositories, full annual reports, or a year's worth of meeting transcripts into the prompt.

- Reduced Hallucination Risk: When the model has access to the entire source document, its tendency to fabricate facts can decrease, since it can cross-reference answers directly against the full provided context.

The trade-off is subtle but important: complex RAG may still be necessary for tasks that span millions or billions of documents, which must be indexed in a database, but for tasks involving a few hundred related documents that fit within the window, the affordable, massive context window becomes the dominant, simpler solution.
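That trade-off can be expressed as a simple routing rule: if the whole corpus fits, send all of it; if not, fall back to an indexed retrieval pipeline. This is a minimal sketch under stated assumptions: the token estimate uses a rough 0.75 words-per-token ratio, the window size and reserved headroom are hypothetical constants, the delimiter tags are a formatting choice rather than a requirement, and `plan_request` and related names are illustrative.

```python
import math
from dataclasses import dataclass
from typing import Dict, Optional

WORDS_PER_TOKEN = 0.75       # rule-of-thumb assumption; use a real tokenizer in practice
CONTEXT_WINDOW = 1_000_000   # assumed window size, in tokens
RESERVED_TOKENS = 50_000     # hypothetical headroom for instructions and the answer


@dataclass
class Plan:
    strategy: str                 # "full_context" or "retrieval"
    estimated_tokens: int
    prompt: Optional[str] = None  # only set when the full corpus fits


def estimate_tokens(text: str) -> int:
    return math.ceil(len(text.split()) / WORDS_PER_TOKEN)


def build_full_context_prompt(question: str, documents: Dict[str, str]) -> str:
    """Concatenate every document, wrapped in simple delimiter tags, then the question."""
    parts = [
        f'<document index="{i}" title="{title}">\n{body}\n</document>'
        for i, (title, body) in enumerate(documents.items(), start=1)
    ]
    return "\n\n".join(parts) + f"\n\nQuestion: {question}"


def plan_request(question: str, documents: Dict[str, str]) -> Plan:
    """Send everything if it fits; otherwise signal that indexed retrieval is still needed."""
    prompt = build_full_context_prompt(question, documents)
    tokens = estimate_tokens(prompt)
    if tokens <= CONTEXT_WINDOW - RESERVED_TOKENS:
        return Plan("full_context", tokens, prompt)
    return Plan("retrieval", tokens)


if __name__ == "__main__":
    docs = {"handbook.md": "Employees accrue 20 vacation days per year."}
    plan = plan_request("How many vacation days do employees get?", docs)
    print(plan.strategy)  # full_context
```

Keeping the retrieval path as an explicit fallback, rather than deleting it, is what lets the simpler full-context path be adopted wherever it fits.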

Enterprise Adoption: From Pilot to Production

For business leaders and product managers, this is a green light for adoption in areas previously deemed too risky due to cost uncertainty.

Emerging enterprise use cases for 1M-token context windows highlight several critical areas ripe for disruption:

- LegalTech: Analyzing multi-volume litigation discovery documents, comparing hundreds of contracts simultaneously, and summarizing entire case histories in one pass.

- Software Engineering: Providing an LLM with an entire application's codebase, configuration files, and dependencies to debug complex, cross-file issues or automatically generate documentation for legacy systems.

- Financial Services: Ingesting years of quarterly earnings reports, analyst calls, and regulatory filings to generate comprehensive risk assessments or investment theses quickly.

When cost uncertainty is removed, Return on Investment (ROI) calculations become far clearer. If an AI agent can complete a week's worth of paralegal review in an hour at a predictable, low cost, the case for integrating that technology into core workflows largely makes itself.

Future Implications: The Context Ceiling Rises

What does this mean for the future? The ceiling on what LLMs can conceptually "remember" and process in a single interaction has been raised dramatically. This opens up two exciting frontiers:

The Rise of the "Persistent Agent"

If models can hold millions of tokens, they can potentially maintain context across longer, multi-step interactions without losing essential background information. Imagine an AI consultant that remembers every detail of your company’s history, every previous decision, and every constraint discussed over a three-day engagement, all held within the working memory of the current session.

The Data Ingestion Explosion

We are moving toward an environment where uploading data is less about fitting it into a tight slot and more about providing the *entirety* of the relevant universe. This democratizes access to high-level reasoning for smaller organizations that previously could not afford the infrastructure or complex chunking necessary to make their small data pools useful to older, smaller-context models.

Actionable Insights for Today's AI Leaders

This development requires immediate strategic review across technical and business units:

- Re-evaluate Cost Models: CTOs and Finance departments must immediately revise API spend forecasts. Assume long context input is now the baseline cost for complex tasks, not an outlier expense.

- Audit RAG Systems: For existing applications, engineering teams should run feasibility studies: can we replace our complex RAG retrieval logic with direct, million-token context input for 80% of use cases? If so, simplify the architecture now.

- Prioritize Data Consolidation: Businesses should focus on making their essential knowledge bases (documentation, reports, codebases) machine-readable and centrally available. The easier it is to dump the data into Claude, the faster the AI value is realized.

- Watch the Competitive Landscape: Monitor how competitors price their long-context tiers over the next 30 days. This is a key indicator of who is leading the infrastructure race.
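The cost-model and RAG-audit items above can start as a short script over an inventory of use cases. In this sketch the inventory, the $3-per-million input price, the window size, and the headroom are all hypothetical placeholders; it ignores output tokens and any caching or batch discounts, so treat its spend figure as a rough upper-level input estimate, not a forecast.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class UseCase:
    name: str
    corpus_tokens: int       # tokens needed to include all source material
    queries_per_month: int


def audit(use_cases: List[UseCase], window_tokens: int = 1_000_000,
          headroom: int = 50_000, input_price_per_m: float = 3.0) -> Tuple[float, float]:
    """Return (share of use cases whose full corpus fits in one prompt,
    monthly input spend in USD for those use cases at a flat rate).
    Ignores output tokens and any caching or batch discounts."""
    budget = window_tokens - headroom
    fits = [u for u in use_cases if u.corpus_tokens <= budget]
    share = len(fits) / len(use_cases) if use_cases else 0.0
    tokens = sum(u.corpus_tokens * u.queries_per_month for u in fits)
    return share, tokens * input_price_per_m / 1_000_000


if __name__ == "__main__":
    portfolio = [  # hypothetical inventory
        UseCase("HR handbook Q&A", 120_000, 2_000),
        UseCase("Service codebase review", 700_000, 300),
        UseCase("Contract archive search", 40_000_000, 500),
    ]
    share, spend = audit(portfolio)
    print(f"{share:.0%} fit in one prompt; input spend ~${spend:,.0f}/month")
    # 67% fit in one prompt; input spend ~$1,350/month
    if share < 0.8:
        print("Below the 80% target: keep retrieval for the remainder.")
```

Because every full-context query resends the whole corpus, the spend column scales with query volume as well as corpus size; that multiplication is what the revised forecast needs to capture.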

Anthropic's decision is a clear signal: the era of constrained AI interaction is fading. The models are ready to read entire libraries in one go. The challenge now shifts from *can the AI read it?* to *are we giving the AI the right information to read?* This is where human expertise—curation, governance, and strategic intent—becomes more valuable than ever before.