The Great AI Unbundling: Why ChatGPT Leads But User Loyalty Is Dead in the New LLM Economy

The initial explosion of Generative AI was defined by a single, monolithic leader: ChatGPT. It was the gateway drug to large language models (LLMs) for the world. However, recent market analyses, such as those ranking the top 100 consumer AI products, reveal a profound shift. The market is no longer just about *if* you use AI, but *which* AI you use for a specific task. ChatGPT may still hold the crown in broad visibility, but user loyalty is evaporating. This signals a critical inflection point: we are moving rapidly from the era of novelty to the era of granular utility.

From an AI technology analyst's perspective, this trend doesn't suggest a decline in AI usage; quite the opposite. It indicates a maturing landscape where consumers and, crucially, enterprises are becoming sophisticated shoppers, prioritizing performance, cost, and regulatory fit over mere brand recognition. To truly understand the future of AI adoption, we must examine the forces driving this fragmentation and specialization.

The Maturing Market: From Novelty to Necessary Utility

When ChatGPT first launched, it provided a "wow factor" that masked underlying limitations. Today, similar capabilities are becoming table stakes. If one model can write an email, its competitor must do the same, and likely do it more cheaply or faster. Once basic competence converges, little is left to hold a user to one product, and churn follows.

Our analysis, corroborated by developer surveys and market reports, suggests that users are now engaging in "multi-model usage." They aren't switching entirely; they are building an AI toolkit.

- Task Specialization: A user might use one LLM for initial code drafting because it excels there, switch to another for summarizing dense legal documents due to better long-context handling, and rely on a third, cheaper model for simple, high-volume content moderation.

- Feature Parity and Pricing Wars: As competitors rapidly clone leading features, the primary differentiator shifts from *what* the model can do to *how much* it costs or *how ethically* it was trained. This forces providers into aggressive pricing strategies, further eroding the reason to commit to one vendor.

This "shopping around" is the hallmark of a maturing market. Just as consumers don't rely on a single software application for every digital need, they won't rely on a single LLM for every cognitive task. The market is unbundling the perceived monopoly of the general-purpose chatbot.

The Technical Driver: The Rise of Specialized AI and RAG

Why are developers and power users becoming such savvy shoppers? Because the technology itself is evolving away from needing the single largest, most expensive model for everything. The shift has a technical basis.

The future isn't just bigger models; it’s smarter, smaller, and more context-aware implementations. The increasing adoption of Retrieval-Augmented Generation (RAG) architecture is central to this. RAG systems allow developers to connect powerful, yet general, LLMs to proprietary, specific enterprise data. In this scenario, the model provider becomes less important than the quality of the proprietary data connector.

When developers are asked about their preferences, surveys often show a tilt toward open-source or smaller, highly customizable models that integrate well with existing infrastructure, especially when paired with a solid RAG pipeline. If a task can be solved efficiently by a fine-tuned small language model (SLM) connected to internal documents, paying the premium for the largest proprietary API becomes hard to justify, especially if a competitor offers similar fine-tuning services at a lower rate.
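To make the RAG idea concrete, here is a minimal sketch of the retrieve-then-prompt step. It assumes a toy in-memory document list and uses simple word overlap as a stand-in for embedding similarity; `DOCUMENTS`, `retrieve`, and `build_prompt` are illustrative names, not any particular library's API.

```python
from collections import Counter

# Hypothetical in-memory "knowledge base"; a real system would likely use a vector store.
DOCUMENTS = [
    "Refund requests must be filed within 30 days of purchase.",
    "Enterprise plans include single sign-on and audit logs.",
    "Support hours are 9am to 5pm on weekdays.",
]


def tokenize(text: str) -> list[str]:
    """Lowercase the text and strip basic punctuation from each word."""
    return [word.strip(".,?!").lower() for word in text.split()]


def score(query: str, doc: str) -> int:
    """Count shared words (a toy stand-in for embedding similarity)."""
    shared = Counter(tokenize(query)) & Counter(tokenize(doc))
    return sum(shared.values())


def retrieve(query: str, docs: list[str], k: int = 1) -> list[str]:
    """Return the k documents with the highest overlap score."""
    return sorted(docs, key=lambda doc: score(query, doc), reverse=True)[:k]


def build_prompt(query: str, docs: list[str]) -> str:
    """Assemble the prompt that would be sent to whichever LLM is in use."""
    context = "\n".join(f"- {doc}" for doc in retrieve(query, docs))
    return f"Answer using only this context:\n{context}\n\nQuestion: {query}"


if __name__ == "__main__":
    print(build_prompt("How many days do I have to file a refund?", DOCUMENTS))
```

Note that nothing in the sketch depends on a specific model vendor: the proprietary data and the retrieval step carry the domain knowledge, which is why the model behind the prompt becomes easier to replace.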

Actionable Insight for Developers and Tech Leads:

Focus less on betting on one "winner" LLM API and more on building flexible abstraction layers. Your application layer should be able to swap out the underlying LLM engine with minimal friction, allowing you to pivot immediately based on price, context window size, or specialized training.
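One way such an abstraction layer might look is sketched below. The `TextModel` interface and the two stand-in backends (`EchoModel`, `ShoutModel`) are hypothetical; in practice each backend would wrap a real vendor client, which is not shown here.

```python
from typing import Protocol


class TextModel(Protocol):
    """The only surface the application is allowed to depend on."""

    name: str

    def complete(self, prompt: str) -> str: ...


class EchoModel:
    """Hypothetical stand-in for one vendor's client."""

    name = "echo"

    def complete(self, prompt: str) -> str:
        return f"[echo] {prompt}"


class ShoutModel:
    """Hypothetical stand-in for a second vendor's client."""

    name = "shout"

    def complete(self, prompt: str) -> str:
        return f"[shout] {prompt.upper()}"


class Assistant:
    """Application layer: knows about TextModel, not about any vendor."""

    def __init__(self, model: TextModel) -> None:
        self.model = model

    def summarize(self, text: str) -> str:
        return self.model.complete(f"Summarize: {text}")


if __name__ == "__main__":
    assistant = Assistant(EchoModel())
    print(assistant.summarize("quarterly report"))
    assistant.model = ShoutModel()  # swap the engine without touching app code
    print(assistant.summarize("quarterly report"))
```

Because `Assistant` only calls `complete`, switching providers is a one-line change at the point where the model is constructed.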

Geopolitical Fragmentation: AI Sovereignty and Regional Champions

The second major trend disrupting singular platform dominance is geopolitical fracture. AI development is increasingly becoming a matter of national and regional strategy, moving beyond simple commercial competition.

The global AI race is not just being run by Silicon Valley; it’s being contested across borders due to concerns over data sovereignty, regulatory compliance (like the EU AI Act), and linguistic nuance. This leads to mandated or preferred use of domestic technology.

For instance, in Europe, there is a strong push to support and deploy models from companies like Mistral AI. These European champions are often lauded not just for performance, but for their alignment with stricter European privacy standards and their open-source commitments, which appeal deeply to enterprise customers wary of deep dependence on US hyperscalers. Similarly, in Asia, local models are gaining ground by offering superior performance in local languages and adhering to national data governance policies. This fracturing means that "global usage" is becoming a misnomer; we are seeing parallel, segmented markets.

Implication for Global Business Strategy:

Companies operating internationally can no longer rely on a single, US-centric AI vendor for all regions. Deployment strategies must account for regional "preferred stacks" to ensure smooth compliance and better local performance. The AI architecture must be globally aware but locally deployed.
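As a rough illustration of "globally aware but locally deployed," the sketch below maps a user's region to an approved deployment. The region codes, provider labels, and the `strict` flag are all assumptions made for the example, not real product names or legal guidance.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Deployment:
    provider: str      # hypothetical provider label
    data_region: str   # where requests and data are processed


# Hypothetical mapping of regions to approved stacks.
REGIONAL_STACKS = {
    "EU": Deployment(provider="eu-hosted-model", data_region="eu-west"),
    "US": Deployment(provider="us-hosted-model", data_region="us-east"),
}
DEFAULT_REGION = "US"


def pick_deployment(user_region: str, strict: bool = True) -> Deployment:
    """Return the approved deployment for a region.

    With strict=True, an unmapped region raises instead of silently
    sending data to the default region.
    """
    if user_region in REGIONAL_STACKS:
        return REGIONAL_STACKS[user_region]
    if strict:
        raise LookupError(f"No approved deployment for region {user_region!r}")
    return REGIONAL_STACKS[DEFAULT_REGION]


if __name__ == "__main__":
    print(pick_deployment("EU"))
    print(pick_deployment("APAC", strict=False))
```

Failing loudly by default is a deliberate choice in this sketch: where data residency matters, an unexpected fallback to another region may be worse than an error.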

The Economic Reality: Subscription Fatigue and the Value Proposition

Ultimately, user loyalty hinges on perceived value relative to cost. As more applications integrate AI features—from word processors to customer service bots—the average user begins to feel "subscription fatigue." Why pay $20 a month for AI Suite A if AI Suite B now has 90% of the same features for free or included in another essential subscription?

This economic pressure validates the "shopping around" behavior. Enterprises, too, are scrutinizing API token costs more closely. They are calculating the exact ROI for each model deployed. If Model X provides a 5% increase in quality but costs 50% more than Model Y, the business case for Model X collapses unless that quality gain is worth more to the business than the added spend.
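The Model X versus Model Y comparison can be worked through with a little arithmetic. The figures below are invented for illustration (Model Y at $10 per 1,000 tasks with an 80% success rate, Model X at $15 with 84%, which is 5% better and 50% more expensive); the function names are hypothetical.

```python
def cost_per_success(cost_per_1k_tasks: float, success_rate: float) -> float:
    """Dollars spent per successful task."""
    return cost_per_1k_tasks / (1000 * success_rate)


def breakeven_value_per_extra_success(
    cost_a: float, rate_a: float, cost_b: float, rate_b: float
) -> float:
    """Minimum value of one extra success that justifies model A over model B.

    Costs are dollars per 1,000 tasks; rates are success fractions in (0, 1].
    """
    extra_successes = 1000 * (rate_a - rate_b)
    if extra_successes <= 0:
        raise ValueError("model A must have a higher success rate than model B")
    return (cost_a - cost_b) / extra_successes


if __name__ == "__main__":
    # Invented figures: Y = $10 at 80% success, X = $15 at 84% success.
    print(f"Y: ${cost_per_success(10.0, 0.80):.4f} per success")
    print(f"X: ${cost_per_success(15.0, 0.84):.4f} per success")
    needed = breakeven_value_per_extra_success(15.0, 0.84, 10.0, 0.80)
    print(f"X pays off only if each extra success is worth over ${needed:.3f}")
```

Under these assumed numbers, Model X buys 40 extra successes per 1,000 tasks for an extra $5, so it is worth choosing only when each additional success is worth more than about 12.5 cents.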

This competitive environment forces vendors into a difficult balancing act: continuous, expensive R&D to maintain a feature lead while simultaneously lowering prices to compete with open-source alternatives.

What This Means for the Future of AI Adoption

The period where one company could dominate the entire AI workflow is ending. The future is characterized by a highly competitive, dynamic ecosystem, which is excellent news for innovation and end-users, but presents new challenges for long-term vendor commitment.

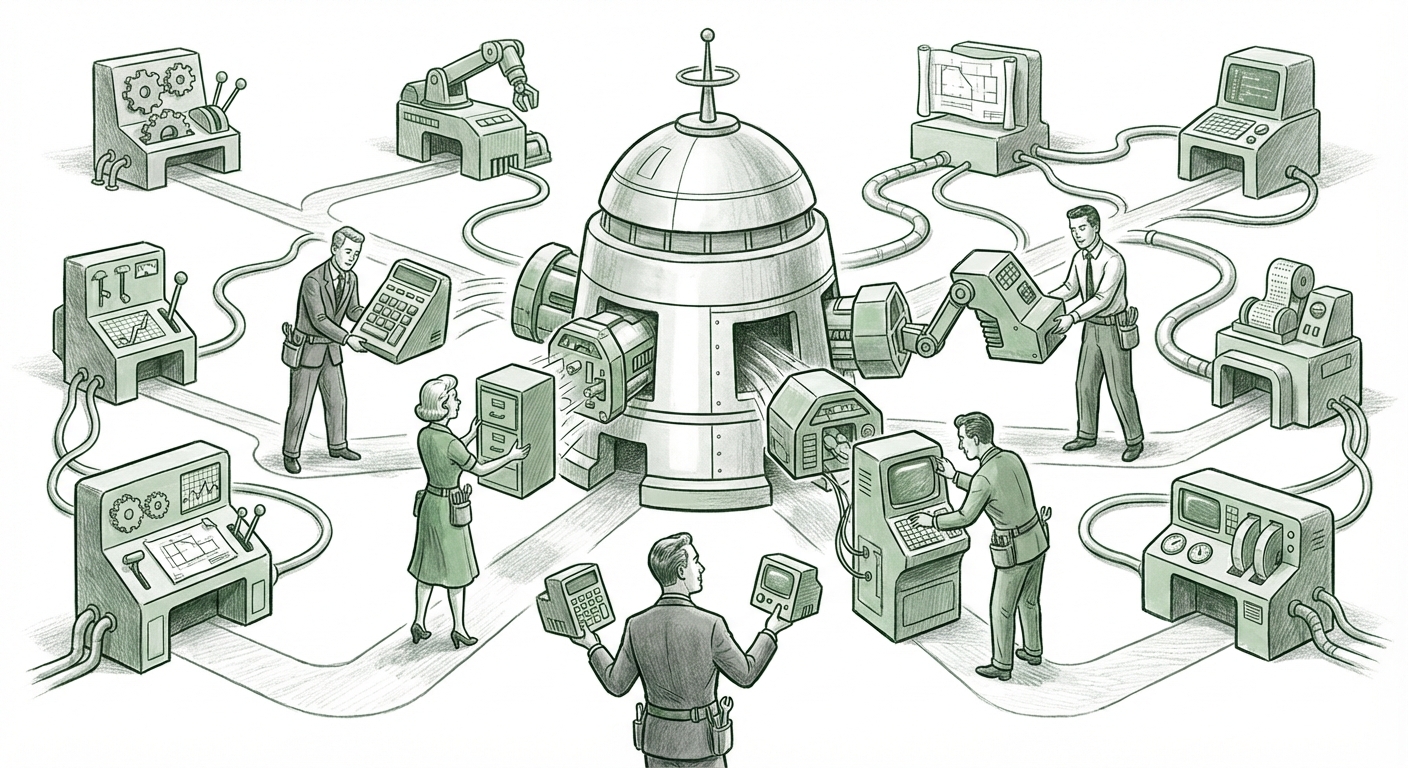

1. The Rise of the AI Orchestrator Layer

The most valuable companies in the next phase of AI adoption will be those that build the *orchestration* layer—the intelligent middleware that manages which LLM, vector database, and fine-tuning service gets called for any given request. These platforms will be model-agnostic, prioritizing efficiency and outcome delivery over vendor allegiance.
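A heavily simplified version of that routing decision might look like the sketch below: pick the cheapest model that has the required skill and can fit the prompt. The catalog entries, prices, and context limits are made up for the example and do not describe real products.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelSpec:
    name: str
    cost_per_1k_tokens: float
    max_context_tokens: int
    skills: frozenset


# Hypothetical catalog; names, prices, and limits are illustrative only.
CATALOG = [
    ModelSpec("small-cheap", 0.10, 8_000, frozenset({"moderation", "summarize"})),
    ModelSpec("long-context", 0.60, 200_000, frozenset({"summarize"})),
    ModelSpec("code-tuned", 0.40, 32_000, frozenset({"code"})),
]


def route(task: str, prompt_tokens: int, catalog=CATALOG) -> ModelSpec:
    """Pick the cheapest model that supports the task and fits the prompt."""
    candidates = [
        model
        for model in catalog
        if task in model.skills and prompt_tokens <= model.max_context_tokens
    ]
    if not candidates:
        raise LookupError(f"no model can handle {task!r} at {prompt_tokens} tokens")
    return min(candidates, key=lambda model: model.cost_per_1k_tokens)


if __name__ == "__main__":
    print(route("summarize", 2_000).name)   # short document
    print(route("summarize", 50_000).name)  # long document
    print(route("code", 1_000).name)
```

A production orchestrator would weigh more signals (latency, measured quality, compliance), but the model-agnostic shape is the same: the caller states the task, and the router chooses the engine.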

2. The Importance of Context Over Raw Intelligence

As models become roughly equivalent in general intelligence, the competitive edge shifts to context. Future platforms will win based on their ability to ingest, manage, and secure proprietary data better than anyone else, using RAG or deep fine-tuning to provide domain-specific reasoning that a generalized model cannot match.

3. Ecosystem Resilience

For society, this fragmentation breeds resilience. If one major AI provider suffers an outage, experiences a catastrophic failure, or changes its pricing structure drastically, businesses built on a multi-vendor strategy can weather the storm by redirecting traffic to a viable alternative quickly. This diversification reduces systemic risk across the technological foundation of the modern economy.
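The "redirecting traffic" idea can be sketched as an ordered failover loop. The providers here are plain functions that simulate an outage and a healthy backup; `ProviderError` and the function names are hypothetical, and a real implementation would also need timeouts and retry limits.

```python
from typing import Callable, Sequence


class ProviderError(Exception):
    """Raised when a provider call fails (outage, rate limit, and so on)."""


def complete_with_failover(
    prompt: str,
    providers: Sequence[tuple[str, Callable[[str], str]]],
) -> tuple[str, str]:
    """Try providers in order; return (provider_name, answer) from the first success."""
    errors = []
    for name, call in providers:
        try:
            return name, call(prompt)
        except ProviderError as exc:
            errors.append(f"{name}: {exc}")
    raise ProviderError("all providers failed: " + "; ".join(errors))


def flaky_primary(prompt: str) -> str:
    """Simulated primary provider that is currently down."""
    raise ProviderError("simulated outage")


def steady_backup(prompt: str) -> str:
    """Simulated backup provider that answers normally."""
    return f"backup answer to: {prompt}"


if __name__ == "__main__":
    chain = [("primary", flaky_primary), ("backup", steady_backup)]
    print(complete_with_failover("status report", chain))
```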

4. Implications for Society: Informed Consumption

On a societal level, the "shopping around" behavior means users are becoming more discerning about the quality and origin of the AI-generated output they consume. They are learning to recognize the subtle "tells" of different model personalities, pushing creators to be more transparent about the AI tools they employ.

Actionable Strategy in the Age of AI Unbundling

For any business looking to integrate AI deeply into its operations, the strategy must adapt to this fluid landscape:

- Benchmark Constantly: Institute regular, structured benchmarking tests where new and existing models are tested against your specific, high-value tasks. Do not rely on yesterday’s leader being today’s best fit.

- Prioritize Data Control: Invest heavily in the infrastructure surrounding your data (security, vectorization, access governance). This data layer is your unshakeable moat, regardless of which LLM sits on top of it.

- Adopt Open Standards Where Possible: Leverage open standards and open-source components for non-core tasks to maintain flexibility and to reduce proprietary lock-in, especially where licensing costs become prohibitive.

- Design for Modularity: Treat your AI stack like a microservices architecture. Ensure the front-end application layer is decoupled from the back-end model API to facilitate rapid component swapping.
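
For the benchmarking item in the list above, a minimal harness could look like the following. The test cases and the two "models" are toy stand-ins (simple lookup functions), and the pass criterion, a substring match, is an assumption; real evaluations usually need task-specific scoring.

```python
from typing import Callable

# Hypothetical task suite: (prompt, substring the answer must contain).
CASES = [
    ("2 + 2", "4"),
    ("capital of France", "Paris"),
]


def model_a(prompt: str) -> str:
    """Toy stand-in for a model that handles both cases."""
    return {"2 + 2": "4", "capital of France": "Paris"}.get(prompt, "")


def model_b(prompt: str) -> str:
    """Toy stand-in for a model that handles only one case."""
    return {"2 + 2": "4"}.get(prompt, "I don't know")


def benchmark(
    models: dict[str, Callable[[str], str]],
    cases: list[tuple[str, str]],
) -> dict[str, float]:
    """Return the fraction of cases each model passes."""
    results = {}
    for name, model in models.items():
        passed = sum(expected in model(prompt) for prompt, expected in cases)
        results[name] = passed / len(cases)
    return results


if __name__ == "__main__":
    for name, pass_rate in benchmark({"a": model_a, "b": model_b}, CASES).items():
        print(f"{name}: {pass_rate:.0%} of cases passed")
```

Rerunning the same suite whenever a new model or price change appears turns "benchmark constantly" into a routine check rather than a one-off study.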

The honeymoon period for generalized AI dominance is over. We have entered the phase of rigorous optimization, where the market rewards providers who offer specialized solutions, geographic compliance, and competitive pricing. ChatGPT remains the familiar starting point, but the race now belongs to the nimble, the specialized, and the strategically diversified.