The Great AI Stratification: Why Google's Tiered Image Models Signal the End of Monoliths

The world of generative Artificial Intelligence has long been dominated by the quest for the biggest, baddest model. Think massive parameter counts, unparalleled general knowledge, and, inevitably, astronomical operational costs. However, a recent announcement from Google regarding its new suite of image generation models, dubbed "Nano Banana," suggests a significant pivot. Instead of relying on a single, colossal engine for every task, Google is strategically segmenting its capabilities into tiers. Specifically, the emergence of a budget-friendly "Nano Banana 2" achieving 95% of the Pro model’s quality while incorporating web-search capability is not just a product update; it is a blueprint for the next phase of AI commercialization.

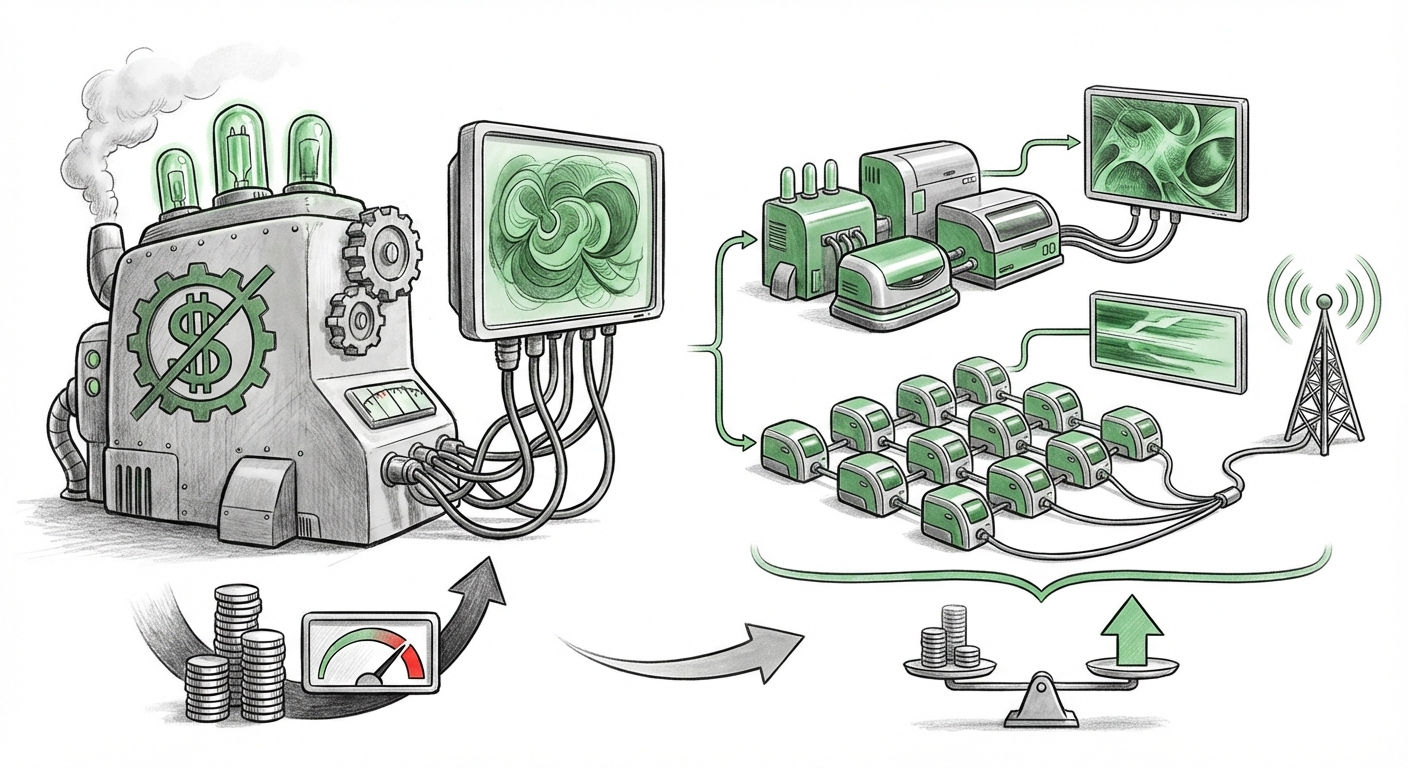

This development mirrors and reinforces a critical trend across the entire technology sector: the move away from monolithic, universal AI to **highly optimized, tiered deployment strategies** focused ruthlessly on efficiency, cost-effectiveness, and specialized task performance.

The Death of the One-Size-Fits-All AI

For years, the race was simple: bigger was better. The largest language models (LLMs) and diffusion models showed incredible promise because their scale unlocked emergent capabilities. But scale comes at a steep price, not just in training but in inference: the cost of actually running the model every time a user asks a question or generates an image.

The introduction of Nano Banana 2 tells developers and business leaders that they no longer need to pay "Pro-level" GPU time for "good enough" results. This echoes industry research suggesting that smaller, purpose-built models can often match the performance of their gargantuan counterparts on specific tasks. When we look at comparable industry developments, we see this principle applied across modalities. For instance, highly optimized open-source diffusion models challenge the need for the largest proprietary image engines on benchmarks related to speed and quality trade-offs.

For the technical audience (ML Engineers and CTOs), this means the conversation shifts from raw FLOPS to **efficiency ratios**. If Nano Banana 2 delivers 95% quality at perhaps 20% of the cost of the Pro version, the choice for high-volume applications becomes obvious. This stratification allows cloud providers and AI companies to maximize resource utilization by matching computational power precisely to user value.
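To make the efficiency-ratio argument concrete, here is a minimal arithmetic sketch. The 95% quality figure is the one quoted above; the 20% cost figure is only the "perhaps" estimate from the previous paragraph, not a published price. The `ModelTier` class and `efficiency_ratio` function are illustrative names, not part of any Google API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelTier:
    name: str
    relative_quality: float  # quality relative to the Pro tier (Pro = 1.0)
    relative_cost: float     # cost per image relative to the Pro tier (Pro = 1.0)


def efficiency_ratio(tier: ModelTier) -> float:
    """Quality delivered per unit of cost, normalized so that Pro scores 1.0."""
    return tier.relative_quality / tier.relative_cost


# 0.95 is the quality figure quoted in the article.
# 0.20 is the article's hypothetical cost estimate, used here as an assumption.
pro = ModelTier("Pro", relative_quality=1.0, relative_cost=1.0)
budget = ModelTier("Nano Banana 2", relative_quality=0.95, relative_cost=0.20)

for tier in (pro, budget):
    print(f"{tier.name}: {efficiency_ratio(tier):.2f} quality units per cost unit")
```

Under those assumed numbers, the budget tier delivers 4.75 times as much quality per unit of spend, which is why the choice looks obvious for high-volume workloads. If the real cost gap is narrower, the ratio shrinks accordingly.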

The Economic Imperative: Cost Optimization as a Feature

Running generative AI at global scale is unsustainable without cost management. Training a state-of-the-art model costs millions; serving inference for billions of daily requests can cost far more over time. This operational expense is driving aggressive strategies for cloud cost optimization in generative AI infrastructure.

Google’s tiered approach effectively democratizes high-quality generation by creating a high-margin, high-fidelity "Pro" tier for specialized creative work, and a highly scalable, low-cost "Standard" tier for everything else—customer support visuals, internal mockups, or standard web content. This is intelligent resource allocation. It ensures that mission-critical, novel tasks utilize the most capable (and expensive) resources, while the repetitive, high-volume tasks are handled by efficient workhorses.

The Power of Grounding: Knowledge in the Moment

Perhaps the most forward-thinking aspect of Nano Banana 2 is its built-in ability to search the web for reference images before generating output. This feature tackles two of the most persistent, dangerous flaws in current generative AI: hallucination and obsolescence.

Pre-trained models are locked into the data they learned from, which often stops months or years in the past. If you ask a base model to generate an image of a device released last week, it will fail or generate something completely inaccurate. By integrating web search capabilities—functionally applying Retrieval-Augmented Generation (RAG) principles to multimodal outputs—Google is enhancing relevance and reliability.
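The retrieve-then-generate pattern described here can be sketched in a few lines. This is a generic illustration of RAG-style grounding, not Google's implementation: `grounded_generate`, `fake_search`, and `fake_generate` are hypothetical names, and the two stubs stand in for a real image search service and a real image model.

```python
from typing import Callable, List, Sequence

SearchFn = Callable[[str], Sequence[str]]
GenerateFn = Callable[[str, Sequence[str]], str]


def grounded_generate(
    prompt: str,
    search_images: SearchFn,
    generate_image: GenerateFn,
    max_references: int = 3,
) -> str:
    """Fetch fresh reference images first, then condition generation on them."""
    references: List[str] = list(search_images(prompt))[:max_references]
    return generate_image(prompt, references)


# Hypothetical stand-ins so the sketch runs without any external service.
def fake_search(query: str) -> Sequence[str]:
    return [f"https://example.com/{i}.png" for i in range(5)]


def fake_generate(prompt: str, references: Sequence[str]) -> str:
    return f"image for {prompt!r} using {len(references)} reference(s)"


print(grounded_generate("device released last week", fake_search, fake_generate))
```

The point of the design is ordering: retrieval happens at request time, so the references can be newer than anything in the model's training data.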

This concept of grounding is vital for the future of reliable AI.

- Factual Accuracy: For commercial use, seeing is believing, but seeing correctly is necessary. Grounding helps ensure the generated image reflects current reality.

- Reduced Hallucination: By using external, verifiable visual data as a template, the model is less likely to invent details that don't exist.

- Dynamic Content: It allows for instant generation of visuals related to breaking news or rapidly changing product lines, a capability that static models simply cannot offer.

This feature, placed in the cheaper model, suggests Google believes that relevance driven by current data is often more valuable to the average user than sheer aesthetic perfection.

Implications for the Business Landscape

These strategic tiers have profound implications across the tech ecosystem, affecting product strategy, competitive dynamics, and developer choice.

1. Product Strategy: Matching Fidelity to Need

Businesses relying on AI image generation must now conduct a "Fidelity Audit." Is your marketing team creating high-stakes, award-winning visuals that require the Pro tier? Or are your internal teams creating dozens of daily social media banners that only need 95% fidelity? The latter should be utilizing the cost-effective tier.

This allows companies to build tiered service offerings: a "Basic" subscription gets access to the grounded, fast Nano Banana 2, while a "Premium" subscription unlocks the full, nuanced power of the Pro model for specialized tasks.

2. Competitive Pressure and Open Source

Google’s move puts immediate pressure on rivals to clarify their own cost/performance matrix. If a major player offers near-parity at a fraction of the cost, proprietary advantage erodes quickly. This dynamic also fuels the ongoing conversation around proprietary vs. open-source strategies. Open-source communities thrive on finding efficient architectures; when major players embrace smaller, highly capable models, they validate the open-source pursuit of efficiency.

3. The Future Workforce: Prompt Engineering Meets Resource Management

The skills required by the next generation of AI users are evolving. Prompt engineering remains crucial, but now it must be paired with resource management engineering. Users will need to know not just what to ask, but which model to ask it of. A novice user might default to the Pro model for every request, wasting budget, while an expert user will craft a prompt optimized for the cost-efficient, grounded Nano Banana 2.

Navigating the Stratified Future: Actionable Insights

How should organizations prepare for a world where AI performance is no longer a single number, but a spectrum of choices?

For Developers and Engineers: Embrace Efficiency Benchmarking

Stop optimizing solely for peak quality metrics (like FID scores). Start optimizing for utility-weighted performance. If a model needs to be fast and current (grounded), benchmark its real-world latency and data freshness, rather than its theoretical maximum aesthetic ceiling. Use Nano Banana 2 as the new baseline for everyday tasks.
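One way to express "utility-weighted performance" is a weighted sum of normalized quality, latency, and freshness. The sketch below is an assumption about how a team might score candidates, not an established benchmark: the `Measurement` fields, the default weights, and the sample values are all placeholders to be replaced with your own measurements.

```python
from dataclasses import dataclass
from typing import Tuple


@dataclass(frozen=True)
class Measurement:
    quality: float         # 0..1, from whichever quality metric the team trusts
    latency_s: float       # measured end-to-end seconds per image
    staleness_days: float  # age of the newest information the output reflects


def utility_score(
    m: Measurement,
    *,
    latency_budget_s: float,
    max_staleness_days: float,
    weights: Tuple[float, float, float] = (0.5, 0.3, 0.2),  # assumed weights
) -> float:
    """Weighted sum of quality, latency headroom, and freshness (each 0..1)."""
    w_quality, w_latency, w_fresh = weights
    latency_term = max(0.0, 1.0 - m.latency_s / latency_budget_s)
    freshness_term = max(0.0, 1.0 - m.staleness_days / max_staleness_days)
    return w_quality * m.quality + w_latency * latency_term + w_fresh * freshness_term


# Placeholder inputs for illustration only; these are not real benchmark results.
slow_stale = Measurement(quality=1.0, latency_s=8.0, staleness_days=365.0)
fast_fresh = Measurement(quality=0.95, latency_s=2.0, staleness_days=0.0)

for label, m in (("slow_stale", slow_stale), ("fast_fresh", fast_fresh)):
    score = utility_score(m, latency_budget_s=10.0, max_staleness_days=365.0)
    print(f"{label}: {score:.3f}")
```

The weights encode what the product actually values. A team producing print campaigns would raise the quality weight; a team generating news visuals would raise the freshness weight.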

For Business Leaders: Implement Usage Governance

Establish clear policies on which model tier should be used for which function. Treat model deployment like cloud storage tiers: infrequently accessed, archive-level data goes to slow, cheap storage; active, mission-critical data goes to fast, expensive storage. In AI terms, use the efficient tier for high-volume, lower-stakes tasks, and reserve the Pro model for the truly novel challenges.
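A usage policy like this can live in code as a simple lookup table. The sketch below is a minimal example under assumed names: the use-case keys, the `Tier` enum, and `select_tier` are hypothetical, and a real deployment would map each tier to whatever model identifiers the provider exposes.

```python
from enum import Enum


class Tier(Enum):
    EFFICIENT = "efficient"  # high-volume, lower-stakes work
    PRO = "pro"              # novel, high-stakes work


# Hypothetical policy table; the use-case names are illustrative only.
POLICY = {
    "social_banner": Tier.EFFICIENT,
    "internal_mockup": Tier.EFFICIENT,
    "support_visual": Tier.EFFICIENT,
    "campaign_hero_image": Tier.PRO,
}


def select_tier(use_case: str, default: Tier = Tier.EFFICIENT) -> Tier:
    """Return the approved tier for a use case, falling back to the cheap tier."""
    return POLICY.get(use_case, default)


for use_case in ("social_banner", "campaign_hero_image", "unlisted_task"):
    print(f"{use_case} -> {select_tier(use_case).value}")
```

Defaulting unlisted use cases to the efficient tier means the expensive model is opt-in: someone has to add an entry to the policy before a new workload can spend Pro-level budget.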

For Product Managers: Grounding is the New Feature

If your product relies on visual output, prioritize models that offer real-time grounding or RAG integration. The ability to generate an image based on information retrieved seconds ago is rapidly becoming a table-stakes feature, not a luxury add-on. This ensures your product remains relevant long after the model's initial training cutoff date.

Conclusion: Precision Over Power

Google’s delineation of the Nano Banana models underscores a necessary maturation of the generative AI industry. We are moving beyond the era of awe-inspiring, yet impractical, behemoths. The future belongs to the architect who can precisely engineer the right tool for the job—a tool that is fast, aware of the present moment via web grounding, and crucially, economically viable at scale.

This stratification signals a transition from pure R&D showcasing to robust, sustainable commercial deployment. The era of AI precision engineering has begun, demanding that developers and businesses alike become adept at navigating a complex menu of specialized AI services rather than simply relying on the single, most expensive option available.