Hume AI's TADA: The End of the Speed vs. Accuracy Trade-off in Voice Generation?

In the fast-moving world of Artificial Intelligence, breakthroughs often come with compromises. For years, generating high-quality synthetic speech meant accepting slower processing times, or alternatively, trading fluency for speed. Enter Hume AI’s recently open-sourced TADA model. This new speech generator isn't just claiming incremental improvements; Hume AI reports that it runs five times faster than its rivals while maintaining a critical feature: zero hallucinated words.

As an AI technology analyst, I see this development as far more than just a faster text-to-speech tool. It signals a fundamental shift in how we approach multimodal AI, meaning the systems that handle different types of data, like text and sound, simultaneously. By open-sourcing TADA under the permissive MIT license, Hume AI has put a powerful, high-performance component directly into developers' hands, setting the stage for intense competition and rapid adoption across industries.

The Three Pillars of the TADA Revolution

To understand the significance of TADA, we must break down the three major claims made by Hume AI, each challenging an accepted industry constraint:

1. The Speed Barrier: Five Times Faster Processing

Latency—the delay between input and output—is the make-or-break factor for real-time applications. Think about talking to a voice assistant, participating in a live translation service, or even using real-time customer service bots. If the AI takes too long to respond, the conversation feels unnatural and frustrating. Previous high-fidelity speech models often relied on complex sequential steps, which added cumulative delay.

TADA’s reported 5x speed advantage suggests a radical architectural efficiency. When analyzing current industry trends, researchers are keenly focused on this area. We are constantly looking for evidence that new models can match the speed of legacy systems while delivering modern quality. This quest for near-instantaneous response is crucial for the next generation of immersive computing.

To truly validate this, the AI community is now searching for robust benchmarks comparing TADA against proprietary giants, examining whether its response times are low enough to feel instantaneous in seamless dialogue systems.

2. The Reliability Mandate: Zero Hallucinated Words

In the era of Large Language Models (LLMs), the term "hallucination" has become synonymous with factual inaccuracy—the model confidently stating falsehoods. While speech generation models don't hallucinate facts in the same way, they absolutely "hallucinate" audio artifacts. This can manifest as mumbled phonemes, distorted words, or generating speech that doesn't accurately reflect the input text, particularly when trying to mimic complex human emotion or accent.

Hume AI’s claim of zero hallucinated words implies an unprecedented level of fidelity and control over the output waveform. For high-stakes environments, such as automated medical dictation, legal narration, or high-end voiceovers, reliability is non-negotiable. A single garbled word can render an entire passage unusable. If the claim holds up, it shifts the focus in speech AI from merely sounding human to being flawlessly *accurate* to the instruction.

This raises critical questions about how "hallucination" is defined in audio processing and whether this breakthrough applies across emotional and linguistic variance, not just clean, neutral text.
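One way to make the definition concrete is to transcribe the generated audio and compare it word by word with the input text. The sketch below is only an illustration of that idea, not Hume AI's own metric: it assumes a transcript has already been produced by some separate speech recognizer, and the function names `normalize` and `word_mismatches` are hypothetical.

```python
import re
from difflib import SequenceMatcher


def normalize(text):
    """Lowercase the text and split it into word tokens, dropping punctuation."""
    return re.findall(r"[a-z0-9']+", text.lower())


def word_mismatches(input_text, transcript):
    """Count words the audio added, dropped, or changed relative to the input.

    `transcript` is assumed to come from an external speech recognizer,
    so recognizer errors will also show up in these counts.
    """
    ref = normalize(input_text)
    hyp = normalize(transcript)
    counts = {"inserted": 0, "deleted": 0, "substituted": 0}
    matcher = SequenceMatcher(a=ref, b=hyp, autojunk=False)
    for tag, i1, i2, j1, j2 in matcher.get_opcodes():
        if tag == "insert":
            counts["inserted"] += j2 - j1
        elif tag == "delete":
            counts["deleted"] += i2 - i1
        elif tag == "replace":
            # Pair up as many words as possible; the remainder is extra or missing.
            paired = min(i2 - i1, j2 - j1)
            counts["substituted"] += paired
            counts["inserted"] += (j2 - j1) - paired
            counts["deleted"] += (i2 - i1) - paired
    return counts


if __name__ == "__main__":
    print(word_mismatches(
        "The patient takes ten milligrams daily.",
        "the patient takes ten ten milligrams daily",
    ))
```

Under this definition, "zero hallucinated words" would mean an `inserted` count of zero across a test set, and a stricter reading would also require zero substitutions and deletions. Running the same check on emotional or accented prompts is what would show whether the claim extends beyond clean, neutral text.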

3. The Architectural Leap: Synchronous Text and Audio Processing

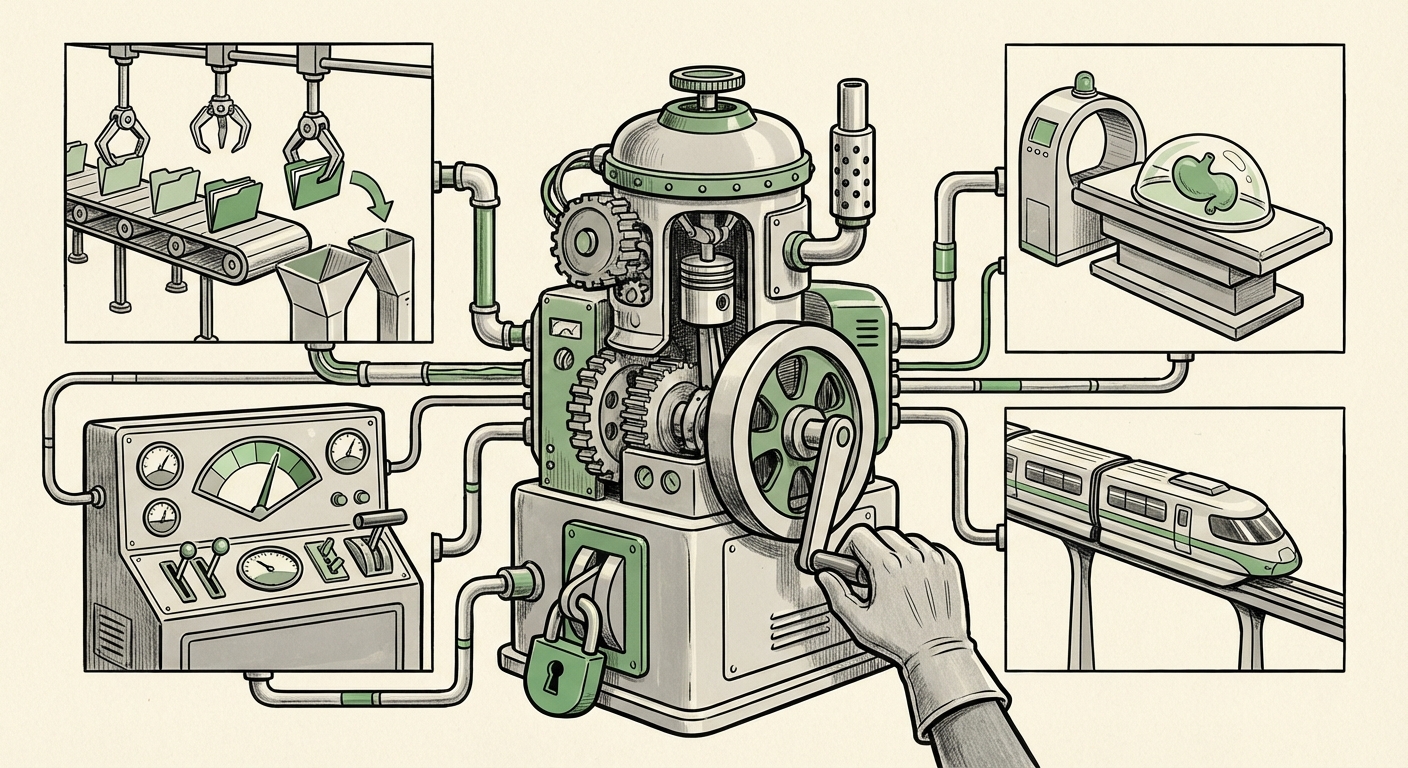

The secret sauce likely lies in TADA’s architecture. Older systems often used a pipeline: first, the text was processed into phonetic codes; second, those codes were converted into a spectral representation (like a blueprint for sound); and finally, a vocoder turned that blueprint into audible sound waves. Each step introduced potential error and delay.
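The staged design described above can be sketched as three functions chained together. Everything in this toy example is a placeholder: the stage functions are trivial stand-ins for trained models, and the per-stage costs in milliseconds are invented purely to show that each stage must finish before the next can start, so the delays add up.

```python
from typing import List

# Toy stand-ins for the three classic stages. Real systems use trained models.
def text_to_phonemes(text: str) -> List[str]:
    """Pretend each letter is one phonetic code."""
    return [ch for ch in text.lower() if ch.isalpha()]


def phonemes_to_frames(phonemes: List[str]) -> List[List[float]]:
    """Produce one fake two-value spectral frame per phoneme."""
    return [[float(ord(p)), 0.0] for p in phonemes]


def vocoder(frames: List[List[float]]) -> List[float]:
    """Flatten the frames into a fake waveform."""
    return [value for frame in frames for value in frame]


# (stage name, function, hypothetical cost in ms). The costs are made up.
PIPELINE = [
    ("text -> phonemes", text_to_phonemes, 20.0),
    ("phonemes -> spectral frames", phonemes_to_frames, 60.0),
    ("frames -> waveform", vocoder, 40.0),
]


def synthesize(text: str):
    """Run the stages in order and add up their hypothetical costs."""
    data = text
    elapsed_ms = 0.0
    for _name, stage, cost_ms in PIPELINE:
        data = stage(data)      # the output of one stage feeds the next
        elapsed_ms += cost_ms   # sequential stages: delays accumulate
    return data, elapsed_ms


if __name__ == "__main__":
    waveform, total_ms = synthesize("Hi")
    print(len(waveform), total_ms)
```

The same chaining explains the error problem: a mistake in the phoneme stage is passed downstream unchanged, and no later stage has access to the original text to correct it.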

TADA’s ability to process text and audio in sync suggests a unified, end-to-end multimodal architecture. This integration allows the model to build the sound structure simultaneously with understanding the text semantics, leading to both speed and accuracy. This movement toward unified processing is a major technological trend that we are seeing replicated in vision and language models alike.

The market is now looking to see if this synchronous approach can be universally applied to other multimodal tasks, potentially leading to faster, more coherent video and image generation.

Corroborating the Trend: The Broader AI Landscape

TADA’s arrival doesn't happen in a vacuum. It aligns perfectly with several converging trends reshaping the technology sector:

The Open-Source Tsunami

The decision to release TADA under the MIT license is a strategic play mirroring the disruption caused by foundational models like Meta’s LLaMA. By open-sourcing, Hume AI democratizes access to state-of-the-art technology, rapidly accelerating community innovation. Instead of large corporations hoarding proprietary engines, the global developer base can now integrate this high-speed, reliable voice capability directly into their products.

This accelerates the competitive pressure on closed ecosystems. If open-source solutions meet or exceed proprietary quality, businesses will rapidly migrate to solutions that offer greater control, lower operational costs, and zero vendor lock-in. We are seeing this dynamic play out across the entire generative AI stack, and TADA ensures voice technology is next.

The Search for Multimodal Coherence

If synchronous processing delivers the reported gains, it suggests that simply stacking models together is no longer optimal. The future belongs to truly integrated, multimodal foundational models. If a system can understand the context of an image, the emotion in a voice command, and the factual basis of text all at once, the resulting output will be far more nuanced and reliable. TADA’s architecture pushes the industry toward this goal in the audio domain.

Future Implications: Where TADA Will Reshape Industries

The combination of speed, reliability, and open access positions TADA as a disruptive force across several major sectors:

1. Customer Experience and Contact Centers

In the contact center, speed is king. High-speed, zero-error speech synthesis means advanced AI agents can handle complex, multi-turn conversations without frustrating pauses. Furthermore, applications like real-time voice translation, where delays are unacceptable, become truly viable for global customer service deployment.

2. Accessibility and Education

For assistive technologies, accuracy is paramount. Text-to-speech readers for the visually impaired must be flawless. TADA’s reliability ensures that digital content can be rendered audibly without introducing confusing errors. In education, hyper-fast narration allows for instant creation of audiobooks or interactive learning modules customized for individual student pacing.

3. Media Production and Gaming

Video game dialogue and dubbing for film often require massive studios and weeks of recording time. Fast, high-quality voice synthesis can drastically reduce post-production timelines. Imagine an independent game developer instantly generating thousands of lines of dialogue with guaranteed fidelity, eliminating the need for extensive studio correction passes.

Actionable Insights for Businesses

For organizations currently relying on third-party, proprietary voice APIs, Hume AI’s open-source release provides an immediate opportunity for strategic reassessment:

- Benchmark Immediately: CTOs and engineering leads should initiate pilots comparing TADA (or models built on its principles) against their current cloud provider’s voice service, focusing specifically on latency under peak load and error rates in complex text inputs.

- Reassess Cost Structures: Open-source adoption replaces per-use API fees with infrastructure and maintenance costs. For high-volume applications, adopting and self-hosting TADA could lead to significant long-term savings, provided the necessary in-house GPU infrastructure is available.

- Focus on Multimodal Integration: Developers should begin exploring how to leverage the synchronous processing philosophy within their own multimodal projects, understanding that decoupling text and audio processing steps is likely an outdated paradigm.
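For the benchmarking step, a small timing harness is enough to get started. The sketch below is a minimal example under stated assumptions: `synthesize` is any callable that takes text and returns audio, and `fake_engine` is a hypothetical placeholder to be swapped for a wrapper around TADA or a cloud voice API. It measures one request at a time, so it does not by itself capture behavior under peak load.

```python
import math
import time
from statistics import median


def percentile(values, p):
    """Nearest-rank percentile: the smallest sample with at least p% at or below it."""
    ordered = sorted(values)
    rank = max(1, math.ceil(p * len(ordered) / 100))
    return ordered[rank - 1]


def benchmark(synthesize, texts, runs=3):
    """Time synthesize(text) for every text, `runs` times each, in milliseconds."""
    samples_ms = []
    for text in texts:
        for _ in range(runs):
            start = time.perf_counter()
            synthesize(text)
            samples_ms.append((time.perf_counter() - start) * 1000.0)
    return {
        "count": len(samples_ms),
        "median_ms": median(samples_ms),
        "p95_ms": percentile(samples_ms, 95),
    }


def fake_engine(text):
    """Hypothetical stand-in for a real speech engine; replace with a real call."""
    time.sleep(0.001)
    return b""


if __name__ == "__main__":
    prompts = [
        "Short prompt.",
        "A longer prompt with numbers like 3.14 and names like Dr. O'Neil.",
    ]
    print(benchmark(fake_engine, prompts))
```

Running the same prompt set against each candidate engine and comparing median and 95th-percentile latency gives a like-for-like starting point. The tail figure matters more than the average for conversational use, because occasional slow responses are what users notice.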

The Road Ahead: Maintaining Trust in the Age of Synthetic Sound

While TADA addresses the technical hurdles of speed and fidelity, it also raises societal concerns around synthetic media. As voice generation becomes faster, easier to use, and harder to distinguish from human speech, the line between authentic communication and sophisticated deepfakes blurs further.

The very success in eliminating "hallucinations" means the generated voice will be even more convincing. This necessitates parallel investment in robust AI detection and provenance tools. For the ethical development of this technology to continue, the industry must prioritize watermarking and content authentication alongside performance improvements.

Hume AI’s TADA is more than just a faster engine; it is a blueprint for the next generation of practical, integrated AI systems. By demonstrating that performance, reliability, and openness can coexist, TADA is setting a new, higher bar for what we should expect from the tools that translate our digital intent into the real world of sound.