TADA: The Generative AI Breakthrough Redefining Speed, Fidelity, and Open Source in Speech Synthesis

The generative AI landscape is defined by a constant tug-of-war: higher quality often demands substantially more computational power, which means slower inference. This tension has long dictated which models are practical for real-time, high-volume commercial use. Hume AI’s recent decision to open-source its TADA speech generation model is forcing a serious re-evaluation of this fundamental trade-off.

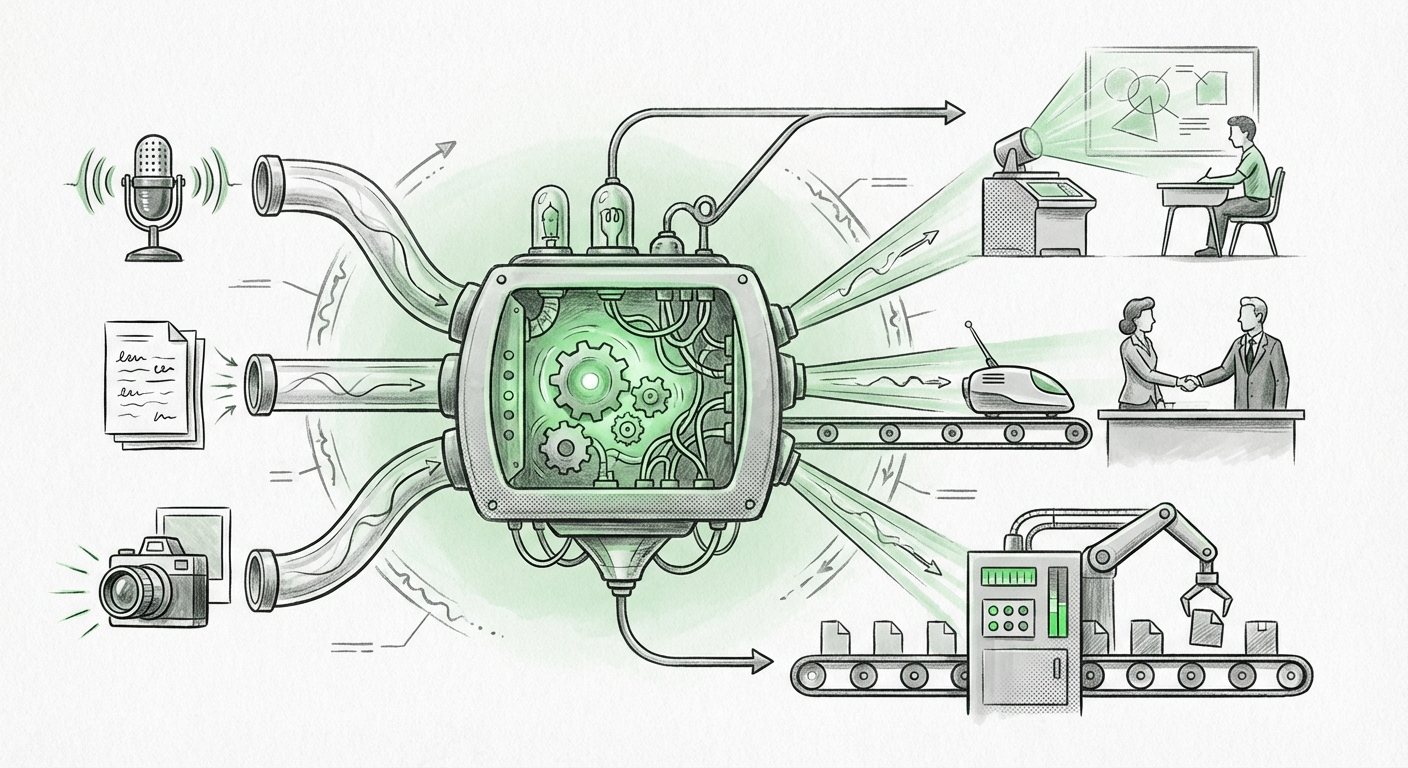

TADA is not just another text-to-speech (TTS) model. It arrives with three headline claims that, taken together, could mark a genuine inflection point in AI deployment. According to Hume AI, it runs five times faster than competing models, it processes text and audio in perfect sync (a multimodal capability), and it produces zero hallucinated words.

To understand TADA’s implications, we need to look beyond speech generation itself and analyze how these three advances (speed, reliability, and open access) resonate across the wider spectrum of generative AI, from LLMs to robotics.

The Efficiency Frontier: Outpacing the State of the Art

In AI, speed translates directly into cost savings and real-time capability. When inference is the bottleneck, a model that is five times faster delivers up to five times the throughput on the same hardware, or the same throughput on roughly a fifth of the compute. This is crucial for any large-scale deployment.
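The arithmetic is easiest to see through real-time factor (RTF), the seconds of compute needed per second of audio produced. The minimal sketch below assumes a single device billed by the hour; the RTF values and GPU price are hypothetical placeholders, not published TADA benchmarks.

```python
# Hypothetical numbers for illustration only; not published TADA benchmarks.

def concurrent_streams(rtf: float) -> float:
    """Real-time audio streams one device can sustain at a given real-time factor.

    RTF = seconds of compute per second of audio, so a device keeps up with
    1 / RTF streams at once (ignoring batching, memory, and I/O limits).
    """
    return 1.0 / rtf


def cost_per_audio_hour(rtf: float, device_cost_per_hour: float) -> float:
    """Compute cost of synthesizing one hour of audio on an hourly-billed device."""
    return rtf * device_cost_per_hour


baseline_rtf = 0.5              # hypothetical: 0.5 s of compute per 1 s of audio
faster_rtf = baseline_rtf / 5   # apply the claimed 5x speedup
gpu_price = 2.00                # hypothetical USD per GPU-hour

for label, rtf in [("baseline", baseline_rtf), ("5x faster", faster_rtf)]:
    print(f"{label}: {concurrent_streams(rtf):.0f} streams/GPU, "
          f"${cost_per_audio_hour(rtf, gpu_price):.2f} per audio hour")
```

Both quantities scale linearly with RTF, which is why a 5x speedup maps directly onto 5x capacity or a fifth of the cost in this simplified model; real deployments also hit batching and memory limits that the sketch ignores.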

Our analysis of current state-of-the-art text-to-speech benchmarks shows that while quality has soared in recent years (producing near-indistinguishable human voices), the race for raw inference speed has often favored proprietary, heavily optimized closed systems. Hume AI claims TADA shatters these benchmarks by fundamentally rethinking how text is mapped to acoustic features. For developers, this shift means that high-fidelity audio output is no longer a luxury reserved for batch processing; it becomes viable for real-time customer service bots, dynamic content localization, and responsive assistive technologies.

Implication for Developers: Lowering the Barrier to Entry

For AI engineers, TADA’s speed could drastically reduce latency. Think of applications where a delay of even half a second ruins the experience, such as a voice assistant answering a complex question while the user is driving. If TADA lives up to its claims, it could set a new industry standard, pushing competitors to optimize aggressively or risk falling behind in latency-sensitive markets.
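A quick latency-budget calculation shows why a faster synthesis stage matters for a conversational turn. The stage names and millisecond figures below are hypothetical placeholders, and the sketch assumes the claimed 5x speedup applies directly to time-to-first-audio, which may not hold for every streaming setup.

```python
# All stage latencies are hypothetical placeholders, in milliseconds.
BUDGET_MS = 500  # the half-second threshold discussed above


def total_latency(stages: dict[str, float]) -> float:
    """Sum per-stage latencies for one conversational turn."""
    return sum(stages.values())


def fits_budget(stages: dict[str, float], budget_ms: float = BUDGET_MS) -> bool:
    """True if the whole turn completes within the latency budget."""
    return total_latency(stages) <= budget_ms


baseline = {
    "speech_recognition": 150,
    "llm_first_token": 200,
    "tts_first_audio": 250,
    "network": 50,
}
# Assumption: the 5x speedup shortens only the TTS stage.
faster = {**baseline, "tts_first_audio": baseline["tts_first_audio"] / 5}

for name, stages in [("baseline", baseline), ("5x faster TTS", faster)]:
    print(f"{name}: {total_latency(stages):.0f} ms, "
          f"within budget: {fits_budget(stages)}")
```

With these placeholder numbers the baseline turn overshoots the budget and the faster one fits; the point is that TTS is only one stage, so its speedup helps most when synthesis dominates the total.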

The Reliability Mandate: Tackling Hallucinations in Audio

The most intriguing claim is "zero hallucinated words." In the context of large language models (LLMs), hallucination, where the model generates plausible but factually incorrect or nonsensical text, is one of the biggest impediments to enterprise adoption. When an LLM hallucinates, trust erodes instantly.

How does a speech model achieve "zero hallucinations"? In speech synthesis, a hallucination is audio that departs from the input script (inserted, skipped, or repeated words), a well-known failure mode of autoregressive TTS models. The claim suggests that TADA may rely on a strict input-to-output mapping, leaning less on open-ended probabilistic modeling for the final audio layer and more on deterministic or tightly constrained synthesis. This focus on lexical *integrity*, speaking exactly the words it was given, is essential.
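One common way to audit a claim like "zero hallucinated words" is to transcribe the synthesized audio with a speech recognizer and align the transcript against the input script word by word; Hume AI’s exact evaluation protocol is not described here. The minimal sketch below assumes the transcript already exists and implements only the alignment step, a standard word-level Levenshtein alignment. The `align_words` and `AlignmentCounts` names are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class AlignmentCounts:
    substitutions: int  # spoken word differs from the scripted word
    deletions: int      # scripted word missing from the audio
    insertions: int     # spoken word with no counterpart in the script


def align_words(script: str, transcript: str) -> AlignmentCounts:
    """Word-level Levenshtein alignment of an ASR transcript against the script.

    Normalization is deliberately minimal (lowercase + whitespace split);
    real evaluations usually also strip punctuation and normalize numbers.
    """
    ref = script.lower().split()
    hyp = transcript.lower().split()
    n, m = len(ref), len(hyp)
    # dp[i][j] holds (edit_cost, subs, dels, ins) for ref[:i] aligned to hyp[:j].
    dp = [[(0, 0, 0, 0)] * (m + 1) for _ in range(n + 1)]
    for i in range(1, n + 1):
        dp[i][0] = (i, 0, i, 0)  # every scripted word so far was dropped
    for j in range(1, m + 1):
        dp[0][j] = (j, 0, 0, j)  # every spoken word so far was inserted
    for i in range(1, n + 1):
        for j in range(1, m + 1):
            if ref[i - 1] == hyp[j - 1]:
                dp[i][j] = dp[i - 1][j - 1]  # a match costs nothing
                continue
            c, s, d, ins = dp[i - 1][j - 1]
            substitute = (c + 1, s + 1, d, ins)
            c, s, d, ins = dp[i - 1][j]
            delete = (c + 1, s, d + 1, ins)
            c, s, d, ins = dp[i][j - 1]
            insert = (c + 1, s, d, ins + 1)
            # Tuples compare by edit cost first, so min() picks a cheapest path.
            dp[i][j] = min(substitute, delete, insert)
    _, subs, dels, ins = dp[n][m]
    return AlignmentCounts(subs, dels, ins)


counts = align_words(
    "your balance is forty dollars",
    "your balance is is forty two dollars",
)
print(counts)  # AlignmentCounts(substitutions=0, deletions=0, insertions=2)
```

Insertions and substitutions are the words a listener would hear that never appeared in the script. Because the recognizer can make its own mistakes, a nonzero count flags a clip for review rather than proving the TTS model hallucinated.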

Set against ongoing research into LLM hallucination reduction, TADA offers a valuable, if partial, case study: a TTS model only has to stay faithful to a script it is given, while an LLM must also get the content itself right. Still, if LLM developers can draw on TADA’s structural approach to enforce lexical accuracy, we could see meaningful safety improvements across text generation tools.

Implication for Business: Trustworthy Voice Interfaces

For businesses integrating voice AI, whether for automated sales calls, complex IVR systems, or accessibility tools, reliability is non-negotiable. A voice bot that suddenly speaks gibberish or inserts words not present in the script is unusable. If its claim holds up, TADA offers a path toward voice interactions reliable enough for regulated industries like finance or healthcare, where generating spurious information is a compliance risk.

The Power of Synchronization: Next-Generation Multimodality

TADA’s ability to process text and audio "in sync" targets one of the hardest problems in real-time multimodal AI. Multimodality, the ability of an AI system to process and generate data across different forms (text, image, sound), is the next frontier, but keeping those streams synchronized is notoriously difficult.

Consider dubbing video content. The translated script has to be generated and then spoken in a way that matches the on-screen speaker’s mouth movements and the emotional cadence of the original audio track. If the synthesized speech drifts even slightly out of alignment with the picture, it sounds robotic or out of place.

By optimizing the entire pipeline, from text token to acoustic output, for simultaneous processing, TADA could greatly simplify the engineering required for high-quality, synchronized outputs. This moves beyond simple voice generation into true digital persona creation.
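One way to make "in sync" measurable for the dubbing case is to compare each word’s onset in the synthesized audio with the onset it should have in the video. The sketch below assumes word-level timestamps are already available for both tracks; the timings and the 80 ms tolerance are hypothetical values chosen for illustration, not a published standard or a TADA parameter.

```python
# Hypothetical word timings in milliseconds; the tolerance is an assumed value.
TOLERANCE_MS = 80


def drift_report(target_starts: list[int], synth_starts: list[int],
                 tolerance_ms: int = TOLERANCE_MS) -> list[tuple[int, int]]:
    """Return (word_index, offset_ms) for words whose synthesized onset misses its target.

    A positive offset means the dubbed word starts late relative to the picture.
    """
    if len(target_starts) != len(synth_starts):
        raise ValueError("expected one onset per word in both lists")
    return [
        (i, synth - target)
        for i, (target, synth) in enumerate(zip(target_starts, synth_starts))
        if abs(synth - target) > tolerance_ms
    ]


target = [0, 420, 910, 1500]   # onsets aligned to the speaker's mouth movements
synth = [10, 450, 1020, 1490]  # onsets in the dubbed audio
print(drift_report(target, synth))  # [(2, 110)]
```

A pipeline that generates text and audio in lockstep would aim to keep this report empty by construction, rather than repairing drift after synthesis.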

Implication for Media and VR/AR

This feature could be transformative for content creation. Imagine instant, high-quality translation services where the translated audio arrives already synchronized with the video, or virtual reality environments where non-player character (NPC) dialogue reacts to user input without perceptible lag between listening and responding.

The Open-Source Strategy: Democratizing High Performance

Perhaps the most strategic element of the TADA release is the choice to open-source it under the permissive MIT license. This echoes the impact seen when major foundation models were released openly, fostering rapid community innovation.

The track record of open-source speech generation models suggests that proprietary models, while powerful, often stifle experimentation outside their own ecosystems. By offering TADA openly, Hume AI is inviting developers worldwide to stress-test, fine-tune, and integrate the technology immediately. This open approach accelerates its standardization and adoption across countless smaller applications that cannot afford expensive API calls to closed-source giants.

This move positions TADA as a new baseline. As broader open-source trends in AI have shown, when a model offers a significant performance advantage (like a 5x speedup) while remaining freely accessible, incumbents must cut prices, accelerate their R&D, or risk losing market share in the mid-to-low tier deployment space.

Actionable Insight: Building on the Foundation

For the open-source community, this is a call to action. Developers can now build next-generation voice applications—custom synthesis engines, specialized voice cloning tools, or complex conversational agents—using a fast, reliable core model without restrictive licensing concerns.

Synthesizing the Trends: The Future of AI Delivery

TADA’s release is a microcosm of where industrial AI is heading. We are moving away from monolithic, slow models that prioritize brute-force accuracy at any cost, toward specialized, efficient architectures designed for specific real-world constraints.

- Specialization over Generalization (in Inference): While LLMs are vast generalists, TADA shows that highly optimized, specialized models focused on a single task (like high-fidelity, verifiable speech) can outperform generalists when speed and accuracy matter most.

- Reliability as a Feature: "Zero hallucinations" signals that the industry is maturing past novelty toward utility. For AI to be integrated into critical infrastructure, factual grounding and predictable behavior must be core architectural requirements, not just afterthoughts.

- The Open-Source Velocity Multiplier: Open-sourcing high-performance components dramatically increases the rate of technological diffusion. It lowers the cost floor for innovation, allowing startups and researchers worldwide to deploy near-state-of-the-art technology immediately.

What does this mean in practice? In the near future, we can expect voice interfaces to become significantly more pervasive as cost and speed barriers fall. Customer interactions will involve fewer frustrating delays. Educational tools will be able to offer personalized audio instruction instantly. The uncanny valley in digital voice actors will shrink thanks to better synchronization and fidelity.

The ultimate implication is that reliability and speed, unlocked by architectural innovation like that seen in TADA, are the true currencies of the next wave of AI deployment. As this ethos spreads from speech synthesis into other generative domains, we are entering an era where powerful AI is not just possible, but practical, affordable, and trustworthy enough for every corner of the digital economy.