The Great AI Consolidation: Why Tech Giants Are Shedding Jobs to Fund Their $600 Billion Bet

The headlines are jarring: massive layoffs at one of the world's most valuable technology companies, driven not by immediate economic collapse but by a strategic pivot toward a single, extremely expensive goal, mastering artificial intelligence. Reports suggesting that Meta is preparing to cut significant portions of its workforce to finance a multi-hundred-billion-dollar AI investment paint a stark picture of the current technological landscape.

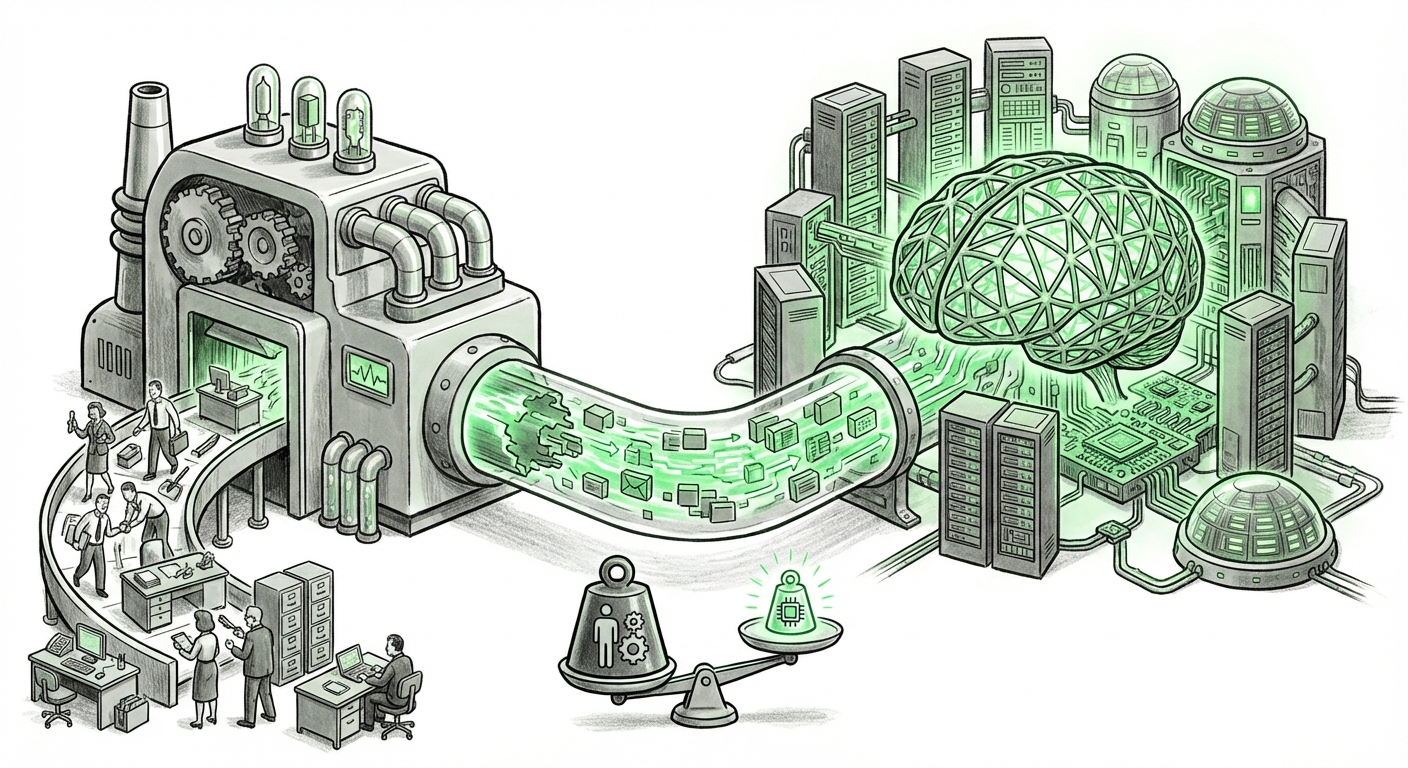

This isn't just corporate housekeeping; it is the financial reality of the AI Arms Race setting in. Achieving true, leading-edge Generative AI capabilities—the kind that redefines search, content creation, and human-computer interaction—demands astronomical capital expenditure (CapEx) and operational expenditure (OpEx). For Big Tech, this means an unavoidable triage: current business units must be trimmed to fuel the future machine.

The Tectonic Shift: From Parallel Innovation to Singular Focus

For years, tech giants thrived on running multiple, often overlapping, innovative tracks. They invested heavily in hardware, metaverse concepts, advertising tech, and consumer social platforms simultaneously. This era of broad, parallel innovation is now rapidly concluding. The sheer cost and competitive urgency of foundational AI models—the Large Language Models (LLMs) that power tools like ChatGPT and its competitors—demand an unprecedented concentration of resources.

To understand the gravity of this move, we must look beyond the internal news cycle and examine the scale of the necessary investment. Three forces are driving this restructuring:

- The Hardware Hunger: Training next-generation LLMs requires specialized, scarce computing power, primarily high-end GPUs (like NVIDIA’s H100 chips). Acquiring these chips costs billions. Furthermore, companies are now designing their own custom silicon (ASICs) to reduce reliance on external vendors and optimize performance, another multi-year, multi-billion-dollar undertaking.

- The Talent Concentration: The top 1% of AI researchers and engineers are critical bottlenecks. To secure them, companies must offer premium compensation, further concentrating salary expenditure in specific departments while legacy teams face scrutiny.

- The Operational Cost Shock: It's not just training; it’s *running* the models. Every query sent to an AI assistant costs significant computing cycles. Scaling this to billions of daily users turns CapEx into an ongoing, massive OpEx problem that must be offset elsewhere.

This financial pressure explains why workforce reductions—which are always politically and culturally difficult—are being framed as a necessary precursor to AI victory. We are witnessing a corporate restructuring mandate where AI proficiency is the new business requirement for survival.

Quantifying the Bet: Hundreds of Billions in Infrastructure Required

A closer look at the scale of AI spending clarifies the picture. While the specific $600 billion figure cited regarding Meta’s ambition might be a blend of long-term investment projections, recent earnings calls from tech leaders consistently emphasize AI infrastructure as the primary capital focus. For example, Microsoft and Google have been explicitly signaling massive, sustained infrastructure investments for years, now supercharged by generative AI demands.

For the average observer, thinking in terms of billions is difficult. Imagine this: a single, state-of-the-art LLM training run can consume more electricity than a small city uses in a day and cost over $100 million in compute time alone. Meta, aiming to build models potentially larger or more specialized than current public offerings, faces costs that climb steeply with model size and training time.
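To make the "over $100 million" figure less abstract, here is a rough back-of-the-envelope sketch. Every input below (the GPU count, the run length, and the hourly rate) is a hypothetical assumption chosen for illustration, not a reported figure for Meta or any other company.

```python
def training_run_cost(num_gpus: int, days: float, usd_per_gpu_hour: float) -> float:
    """Estimate compute cost in USD as GPUs x hours x hourly rate."""
    gpu_hours = num_gpus * days * 24
    return gpu_hours * usd_per_gpu_hour


# Hypothetical inputs: 16,000 GPUs running for 90 days at $3 per GPU-hour.
cost = training_run_cost(num_gpus=16_000, days=90, usd_per_gpu_hour=3.0)
print(f"Estimated compute cost: ${cost / 1e6:,.1f}M")  # Estimated compute cost: $103.7M
```

Doubling either the cluster size or the run length doubles the bill, which is why even modest increases in model ambition translate into very large budget lines.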

Consequently, every non-AI dollar spent becomes a liability. Marketing teams focusing on non-core legacy products, administrative overhead, or tangential hardware projects are now being scrutinized through the harsh lens of **Return on AI Investment (ROAI)**. If a division doesn't directly support the immediate path to achieving AI superiority, its budget—and its personnel—are reassigned or eliminated.

The Cost of Inference: Operationalizing AI

The economics of LLMs show that the one-time cost of training a model is often dwarfed by the ongoing cost of making it useful for everyone. This is the inference cost. If Meta deploys a highly capable, personalized AI across its family of apps (Facebook, Instagram, WhatsApp), the daily transactional cost skyrockets. This sustained expense is the true financial moat being built, and it requires unparalleled operational efficiency, which often means fewer people managing more automated, AI-driven systems.
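A quick sketch shows how fast inference spending can catch up with a one-time training bill. The training cost, query volume, and per-query price below are hypothetical assumptions for illustration only; real figures vary widely by model and hardware.

```python
import math


def daily_inference_cost(queries_per_day: int, usd_per_1k_queries: float) -> float:
    """Daily serving cost in USD, priced per thousand queries."""
    return queries_per_day / 1000 * usd_per_1k_queries


def days_to_match_training_cost(training_cost_usd: float,
                                queries_per_day: int,
                                usd_per_1k_queries: float) -> int:
    """First day on which cumulative inference spend reaches the training cost."""
    daily = daily_inference_cost(queries_per_day, usd_per_1k_queries)
    return math.ceil(training_cost_usd / daily)


# Hypothetical inputs: a $100M training run, 1 billion queries per day,
# and $2 of compute per 1,000 queries.
daily = daily_inference_cost(1_000_000_000, 2.0)
days = days_to_match_training_cost(100_000_000, 1_000_000_000, 2.0)
print(f"Daily inference cost: ${daily:,.0f}")
print(f"Days until inference spend matches training: {days}")
```

Under these assumed numbers, serving costs match the entire training budget in under two months, and they keep accruing for as long as the product is live.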

The Industry-Wide Trend: AI-First is Layoff-First

Meta’s reported actions are not an anomaly; they are the visible tip of an industry iceberg. Broader layoff patterns linked to AI pivots point to a systemic reset. Companies across the spectrum, from social media giants to cloud providers, are cutting roles that involve manual content curation, legacy system maintenance, or secondary research functions.

The message to employees is clear: efficiency through automation, funded by headcount reduction, is the new prerequisite for holding a job in Big Tech. This profoundly affects both job seekers and businesses:

- For Job Seekers: The demand is no longer for general software engineers; it is for MLOps specialists, AI infrastructure architects, and prompt engineers who understand how to interface with these multi-billion-dollar models effectively.

- For Businesses: If you are a vendor or a smaller tech firm dependent on older digital advertising models or non-AI enterprise software, expect the giants to internalize those functions using their new, highly optimized AI stacks, pushing competitors out.

Future Implications: What This Means for AI Deployment

This financial consolidation has significant implications for how AI will develop and integrate into our lives:

1. The Rise of Sovereign AI Ecosystems

When the cost of entry is this high, the gap between the "AI Haves" (Meta, Google, Microsoft, Amazon) and the "AI Have-Nots" widens dramatically. We will see fewer truly foundational models developed outside these walled gardens. This concentration risks creating oligopolies in intelligence, where the rules, biases, and access points for the most powerful tools are controlled by a handful of entities.

2. AI as the Primary Product Layer

If Meta is spending vast sums, the monetization focus shifts entirely. Expect advertising, social engagement, and user retention tools to become hyper-personalized, driven entirely by these new models. The traditional social feed will likely be replaced by an AI-curated, generative experience—a metaverse layer that is financially viable because it runs more efficiently, even if it requires more upfront capital.

3. The Ethical Cost of Efficiency

Workforce cuts often target areas that might seem less glamorous but are crucial for safety and diversity, such as content moderation or policy review. If these human safety nets are reduced to offset hardware costs, the AI systems, which are inherently prone to hallucination or bias, will be deployed at scale with potentially less human oversight. This heightens the risk of societal impact from unchecked algorithmic decisions.

Actionable Insights: Navigating the AI Restructuring

For businesses and technologists looking to thrive in this ruthlessly focused environment, the path forward requires immediate adaptation:

For Business Leaders: Audit for AI Utility

If you are not already doing so, conduct a rigorous audit of every role and project. Ask: Does this function directly contribute to improving our core product using AI, or does it maintain a legacy system that AI will soon automate? Reallocate budgets immediately toward cloud infrastructure contracts focused on AI workloads and upskilling existing staff in prompt engineering and model monitoring.

For Technologists: Become the Bridge

Generalist coding skills are becoming commoditized by AI assistants. The highest value lies in the intersection of disciplines. Focus on MLOps (Machine Learning Operations)—the ability to deploy, monitor, and maintain these massive models reliably. This is the engineering skill that translates massive CapEx into profitable OpEx.

For Policy Makers: Address Concentration Risk

The rapid concentration of AI infrastructure power in a few entities demands regulatory attention, not just on data privacy, but on market access. If the core intelligence layer is controlled by a handful of companies, the future of digital commerce and communication rests on their strategic decisions, not market competition.

Conclusion: The New Reality of Compute Power

The reported layoffs at Meta are a powerful signal that the honeymoon phase of generative AI development is over. The market is now demanding that the technology prove its financial worth through disciplined, massive resource allocation. This isn't a temporary dip; it is the forging of the new technological structure. The future of AI will be built by organizations willing to make these hard trade-offs—shedding the past structures to survive the present financial demands of the compute frontier. Only those who can afford the sustained, multi-billion-dollar race to the next LLM iteration will set the technological agenda for the next decade.