The End of Physical Training? How Simulation-Only Robotics is Redefining AI Deployment

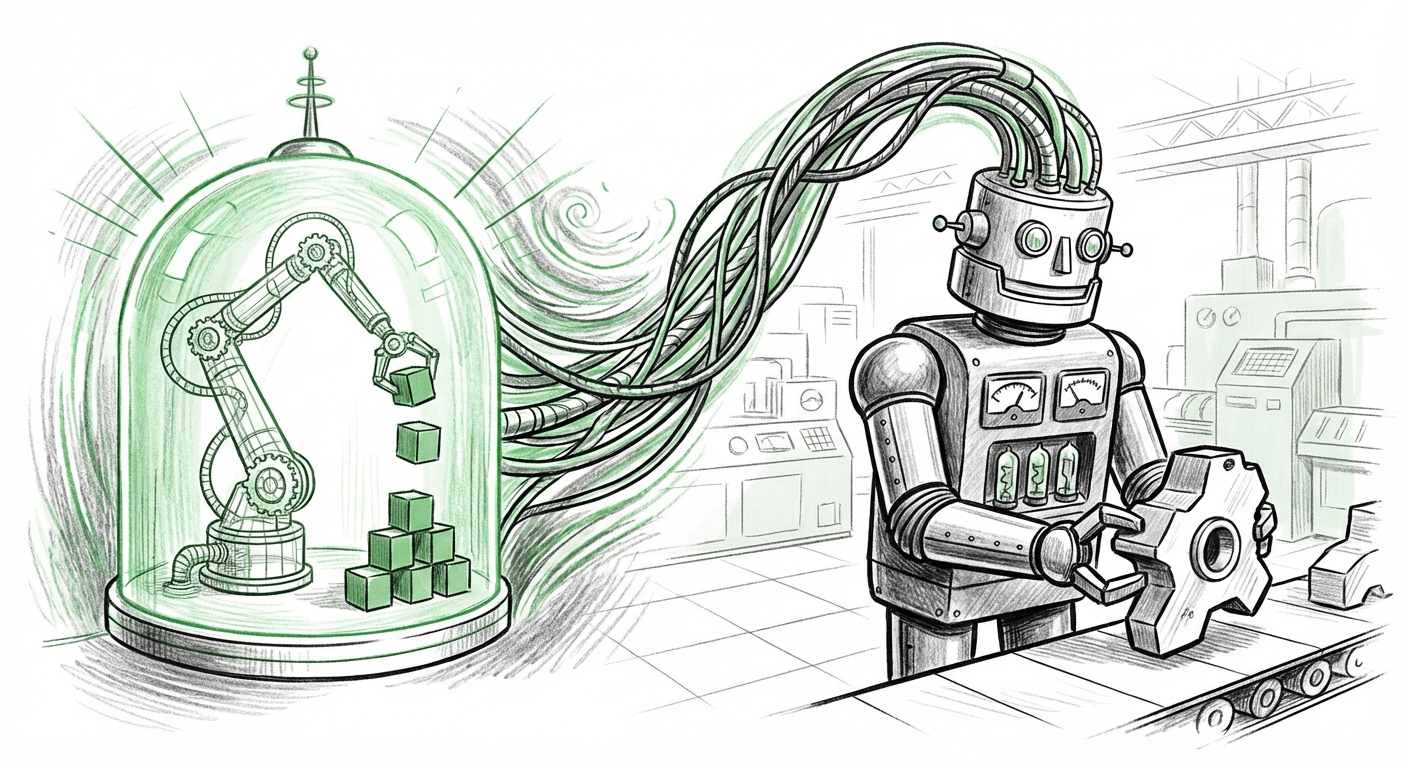

A quiet revolution is brewing in robotics, one that promises to shatter the physical constraints that have long slowed the deployment of intelligent machines. Recent breakthroughs, highlighted by work from the Allen Institute for AI (AI2), suggest that we may be on the cusp of a new era: **Robots trained entirely in virtual worlds that reliably execute tasks in the real world, without requiring physical data collection.**

For years, the journey from an algorithm in a lab to a functioning robot on a factory floor was paved with mountains of expensive, slow, and repetitive real-world data gathering. If a robot needed to learn how to grasp a new object, engineers often had to physically present thousands of variations of that object, risking damage to the hardware and wasting valuable time. The recent news that AI2 has successfully trained models exclusively in simulation supports a growing industry hypothesis: the physical world is becoming optional for initial robotic training.

The "Sim-to-Real" Conundrum: Why Simulation Training Matters

Imagine teaching a child to ride a bike. In the traditional robotics world, this meant strapping the child onto a real bike and letting them crash thousands of times until they learned balance. The "Sim-to-Real" approach is like using a hyper-realistic video game where the child can crash infinitely without injury or expense. The challenge has always been transferring that virtual knowledge to the real world.

The core problem lies in the "reality gap." Simulations, no matter how good, struggle to perfectly replicate complex real-world physics: the exact friction of a surface, subtle changes in lighting, sensor noise, or material elasticity. When a model trained in simulation fails in reality, it is usually because the simulation didn't capture one or more of these details.

AI2’s success signals that researchers are finally finding ways to either make simulations realistic enough, or, more powerfully, make the *AI models robust enough* to handle the inevitable discrepancies between the virtual and the physical.

Corroborating the Trend: The Technology Under the Hood

This breakthrough isn't happening in a vacuum. It relies on significant, concurrent advances in simulation platforms and in the research on transfer learning. To understand what makes results like AI2's possible, we must look at the enabling technologies:

1. High-Fidelity Simulation Environments

The realism required for effective simulation training pushes hardware and software to their limits. Platforms like NVIDIA’s Isaac Sim are becoming central to this research. These simulators aren't just rendering pretty pictures; they integrate sophisticated physics engines and advanced sensor models (like LiDAR and cameras), and some pipelines incorporate techniques like Neural Radiance Fields (NeRFs) to create photorealistic yet physics-accurate digital twins of real environments. When a simulator can accurately model how light reflects off polished metal or how a soft object deforms upon grasping, a model trained in it has a smaller reality gap to bridge.

This technological maturity means the *cost* of creating a physically accurate training environment is becoming dramatically lower than the *cost* of physically collecting the necessary data.

2. The Mathematics of Transfer Learning

Beyond realism, the theoretical underpinnings of Sim-to-Real transfer learning are maturing, and the topic is an active research area in its own right. Researchers are developing algorithms that teach models to be deliberately *agnostic* to small environmental differences. For example, a model might be trained to recognize features that are invariant across both the synthetic world and the real world, effectively teaching it to generalize rather than memorize the simulation's specific quirks.

Work by major labs in this area suggests that massive-scale simulation, on the order of billions of simulated interactions, can create generalizable skills that are resilient to the "noise" introduced when moving to hardware.
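One widely used way to encourage this kind of invariance is domain randomization: each training episode runs under different physics and rendering settings, so the model cannot rely on any single configuration. The article does not say which method AI2 used, so the following is only a minimal sketch of the idea. The `SimParams` fields, the `RANGES` values, and the function names are hypothetical and chosen purely for illustration.

```python
import random
from dataclasses import dataclass


@dataclass(frozen=True)
class SimParams:
    """Hypothetical per-episode simulator settings (illustrative names only)."""
    friction: float          # surface friction coefficient
    light_intensity: float   # relative scene brightness
    sensor_noise_std: float  # std-dev of additive noise on sensor readings
    object_mass_kg: float    # mass of the object being manipulated


# Assumed ranges; in practice these would be chosen to bracket the real hardware.
RANGES = {
    "friction": (0.4, 1.2),
    "light_intensity": (0.3, 1.5),
    "sensor_noise_std": (0.0, 0.05),
    "object_mass_kg": (0.1, 2.0),
}


def sample_params(rng: random.Random) -> SimParams:
    """Draw one randomized environment configuration."""
    return SimParams(**{name: rng.uniform(lo, hi) for name, (lo, hi) in RANGES.items()})


def randomized_episodes(n: int, seed: int = 0) -> list[SimParams]:
    """Generate n configurations; a fixed seed keeps the run reproducible."""
    rng = random.Random(seed)
    return [sample_params(rng) for _ in range(n)]


# Each entry would configure one simulated training episode.
episodes = randomized_episodes(1000)
```

A policy trained across all of these episodes sees the real world as, ideally, just one more variation within the ranges it has already experienced.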

The Commercial Earthquake: Lowering Barriers to Adoption

If AI2’s findings hold up at scale, the economic implications for robotics are staggering. Data collection is arguably the single largest bottleneck in deploying new robotic applications today.

The Economics of Synthetic Data

We must analyze the stark contrast between physical and synthetic data collection. Consider a logistics company needing a robot to pick fragile boxes from a poorly organized shelf:

- Physical Collection: Requires engineers, downtime on the production line, dozens of physical boxes, potential hardware damage from repeated failed grabs, and specialized data labeling teams to annotate every frame. This process can take months and cost hundreds of thousands of dollars per skill.

- Synthetic Collection: The environment (the shelf, the lighting, the boxes) is modeled digitally. The AI can attempt the grab millions of times overnight, across thousands of different lighting conditions (dawn, noon, dusk, harsh factory lights), all without moving a single physical item or risking equipment failure.
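To make the synthetic side concrete, here is a small sketch of how scene variations multiply once the environment is described in data rather than built by hand. The variation axes and their values are hypothetical placeholders for the shelf-picking example, not taken from any particular simulator.

```python
from itertools import product

# Hypothetical variation axes for the shelf-picking scene; values are illustrative.
LIGHTING = ["dawn", "noon", "dusk", "harsh_factory"]
SHELF_CLUTTER = ["tidy", "moderate", "messy"]
BOX_SIZES_CM = [(20, 15, 10), (30, 20, 15), (40, 30, 20)]
CAMERA_TILT_DEG = [-10, 0, 10]


def build_scenarios():
    """Enumerate every combination of the variation axes as a scene description."""
    return [
        {"lighting": light, "clutter": clutter, "box_cm": box, "camera_tilt_deg": tilt}
        for light, clutter, box, tilt in product(
            LIGHTING, SHELF_CLUTTER, BOX_SIZES_CM, CAMERA_TILT_DEG
        )
    ]


scenarios = build_scenarios()
print(len(scenarios))  # 4 * 3 * 3 * 3 = 108 distinct scenes
```

Adding one more axis multiplies the scene count again, which is how a modest description can grow into thousands of conditions. Continuous quantities such as light intensity are usually sampled rather than enumerated.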

Companies are increasingly treating synthetic data as a new source of competitive advantage. If the deployment time for a new robotic task shrinks from nine months to nine days, the competitive edge is transformative.

Democratizing Robotics

Historically, only large corporations with deep pockets could afford the R&D required to deploy cutting-edge robotic systems. Training solely in simulation lowers the required upfront capital investment significantly. Smaller startups, specialized manufacturing firms, and even academic groups can now leverage high-powered cloud-based simulators without needing massive physical testing labs.

This democratization means innovation will accelerate across niche sectors, from highly customized assembly lines to intricate agricultural tasks, that were previously deemed economically unviable for physical robotics research.

The Road Ahead: Embodied AI and the Digital Twin Ecosystem

The ability to train robots in simulation is not an endpoint; it is the launchpad for the next major phase of AI: Embodied Intelligence.

The Rise of General Purpose Robots

Current robots are often brittle; they do one thing well. If you change the environment slightly, they break. By training models in vast, diverse simulations, researchers are moving toward robots with *generalizable* intelligence—agents that understand concepts like gravity, texture, and spatial reasoning, much like a human does.

If a robot learns "how to grip" in a simulation that models 10,000 different objects, it stands a much better chance of successfully gripping a novel object it encounters in the real world.

Synergy with Digital Twins

This trend perfectly aligns with the concept of the Industrial Digital Twin. A digital twin is a virtual replica of a physical asset, factory, or entire city grid. If AI models can be trained and validated entirely within the digital twin environment before being deployed, companies gain an unprecedented level of predictive control.

For instance, a company could test a new robotic workflow in its digital twin simulation (powered by the AI2-style training methodology). If the simulation shows a 5% efficiency gain, and the twin has been validated against the real line, that result can be strong enough to authorize a factory floor rollout with little or no costly physical A/B testing.
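Whether a simulated gain deserves that trust depends partly on run-to-run variance. The sketch below compares paired simulation runs (same random seed, old and new workflow) and reports the mean relative gain with an approximate 95% interval. The function name and the throughput numbers are hypothetical placeholders, not measured results, and the normal approximation is rough for so few runs.

```python
import math
import statistics


def relative_gain_ci(baseline, candidate, z=1.96):
    """Mean relative gain of candidate over baseline, with an approximate 95% interval.

    Inputs are paired per-seed throughput measurements (same seed, both workflows).
    Uses a normal approximation, which is rough for small sample counts.
    """
    if len(baseline) != len(candidate) or len(baseline) < 2:
        raise ValueError("need at least two paired runs")
    gains = [(c - b) / b for b, c in zip(baseline, candidate)]
    mean = statistics.mean(gains)
    half_width = z * statistics.stdev(gains) / math.sqrt(len(gains))
    return mean, mean - half_width, mean + half_width


# Placeholder numbers (picks per hour) for illustration only.
baseline_runs = [100.0, 102.0, 98.0, 101.0, 99.0]
candidate_runs = [105.0, 107.0, 103.0, 106.0, 104.0]

mean, low, high = relative_gain_ci(baseline_runs, candidate_runs)

# Treat the gain as supported only if the whole interval sits above zero.
rollout_supported = low > 0.0
```

A check like this only covers variance inside the simulator. It says nothing about how faithfully the twin matches the physical line, which has to be established separately.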

Actionable Insights for Technology Leaders

For leaders in manufacturing, logistics, and technology development, the shift toward simulation-first training demands immediate strategic attention. Here are crucial steps to prepare:

- Invest in Simulation Infrastructure: Determine which high-fidelity simulation platforms (like those leveraging GPU acceleration) are necessary to replicate your most complex operational environments. Start modeling your core tasks digitally now.

- Re-evaluate Data Strategy: Shift budget allocation away from massive physical data collection efforts toward acquiring or building high-quality synthetic data generation pipelines. Focus on making synthetic data *diverse* and *realistic*, not just plentiful.

- Prioritize Generalization over Specificity: When designing next-generation control algorithms, demand that vendors demonstrate robust Sim-to-Real transfer capabilities. A model trained only on a narrow set of real-world data tends to be more fragile than one trained across massive, varied simulation.

- Upskill in Digital Engineering: Your robotics teams must become proficient in simulation software, physics modeling, and digital twin management. The future robotics engineer is as much a software and visualization expert as they are a mechanical one.

Conclusion: The Reality of Virtual Training

The recent announcements surrounding simulation-only robotics training are not merely incremental improvements; they represent a fundamental shift in the development lifecycle of intelligent machines. By severing the dependency on expensive, slow, real-world iteration, AI is set to move into physical domains at unprecedented speed.

The next decade will see robots deployed faster, cheaper, and more widely across industries that previously found the complexity too daunting. We are moving toward a future where the constraints on robotic intelligence are no longer physical, but purely computational—and that changes everything about how we build and interact with the automated world.