The Simulation Revolution: How Zero Real-World Data is Unlocking Next-Gen Robotics

For decades, the dream of truly versatile, intelligent robots has been hampered by a single, massive bottleneck: data collection. Teaching a robot to reliably pick up a novel object, navigate a cluttered factory floor, or assist in a dynamic household setting required thousands of physical trials—trials that are slow, expensive, and sometimes dangerous to conduct in the real world. Now, a major announcement from the Allen Institute for AI (Ai2) suggests we might be close to sidestepping this reality entirely.

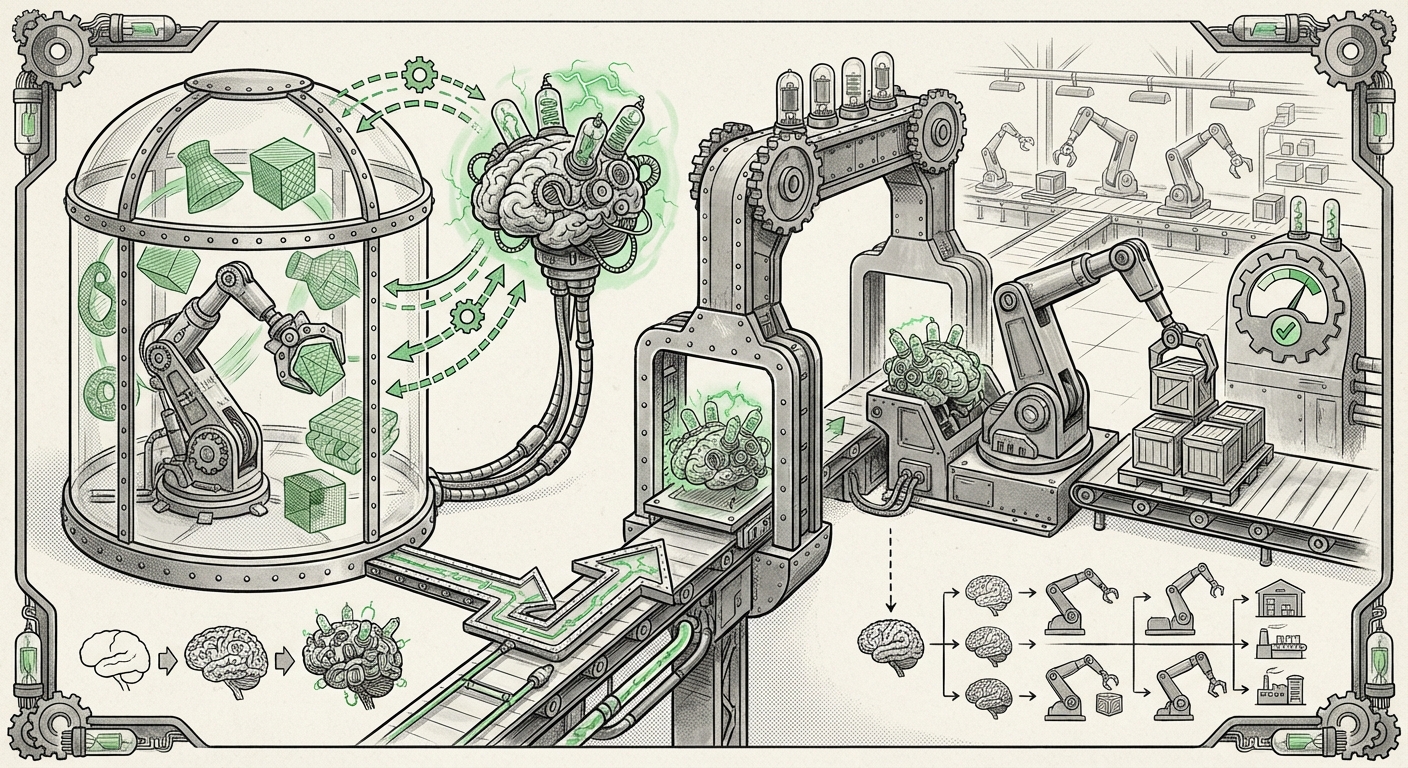

Ai2 has demonstrated the ability to train complex robotic models using only simulated environments, deploying them successfully into the real world without any traditional physical training data. This isn't just an incremental improvement; it’s a paradigm shift in Embodied AI. As an AI analyst, I see this as a critical inflection point that will redefine the speed, cost, and accessibility of robotics development.

The Sim-to-Real Leap: Why This Matters

The process of teaching a machine learning model to perform a task is largely about feeding it data. For robots, this data involves sensory input (what the camera sees) mapped to motor output (what the joints do). Previously, if you wanted a robot to handle 100 different kinds of boxes, you needed a person (or the robot itself) to manipulate those 100 boxes hundreds of times.

The concept of **Sim-to-Real transfer** seeks to move this training into a virtual sandbox. A simulator allows engineers to run millions of trials, many of them in parallel, in a fraction of the time the same trials would take on physical hardware. The challenge, however, has always been the "reality gap." A virtual ball often behaves slightly differently than a real one, and a simulated texture never perfectly matches reality. A robot trained purely in simulation often fails spectacularly when faced with the subtle noise and friction of the physical world.

Ai2's result implies they have substantially narrowed this gap. If it generalizes beyond the tasks demonstrated, robots could be developed faster and more cheaply than ever before. Imagine designing a new warehouse automation task: instead of spending six months physically setting up and testing, engineers could prototype, train, and validate much of the system digitally, possibly in a matter of days.

The Technical Secret: Mastering the Virtual World

How is training without any real-world data actually achieved? It relies heavily on advanced simulation techniques that make the digital environment rich enough to cover real-world uncertainty. The key is moving beyond visual realism to deep physical and stochastic modeling. Two techniques sit at the core:

- Domain Randomization: This technique, often essential for bridging the gap, involves deliberately making the simulation "messy." Instead of training on one perfect virtual kitchen, the model trains across thousands of randomized versions: different lighting, varying camera positions, slightly different object weights, and randomized visual textures. This forces the AI model to learn the *essential features* of the task (e.g., "grasp the handle") rather than memorizing the specific look of the training environment. Researchers investigating these methods often focus on making the training robust enough to handle significant variance [Illustrative Example of Sim-to-Real Concepts].

- Physics Fidelity: Modern simulators, such as NVIDIA's Isaac Sim (a platform central to this trend), are increasingly accurate in modeling friction, gravity, and soft-body dynamics. The more closely simulated physics tracks real-world behavior, the smaller the gap a trained policy must bridge when it is transferred to real hardware.
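The domain randomization idea can be sketched in a few lines of Python. This is a minimal illustration, not Ai2's pipeline: the parameter names, ranges, and texture list are hypothetical, and a real simulator would consume these values through its own API, which is not shown here. Each training episode gets a fresh random draw, so the policy cannot latch onto one specific lighting setup, camera pose, or friction value.

```python
import random
from dataclasses import dataclass

# Hypothetical randomization ranges; a real project would tune these to its own
# robot, sensors, and task.
RANGES = {
    "light_intensity": (0.3, 1.5),    # multiplier on a nominal light level
    "camera_yaw_deg": (-10.0, 10.0),  # jitter around the nominal camera pose
    "object_mass_kg": (0.2, 0.6),
    "friction_coeff": (0.4, 1.1),
}
TEXTURES = ["wood", "steel", "marble", "checker", "noise"]  # hypothetical texture names


@dataclass
class SceneParams:
    light_intensity: float
    camera_yaw_deg: float
    object_mass_kg: float
    friction_coeff: float
    texture: str


def sample_scene(rng: random.Random) -> SceneParams:
    """Draw one randomized variant of the training scene."""
    values = {name: rng.uniform(lo, hi) for name, (lo, hi) in RANGES.items()}
    return SceneParams(texture=rng.choice(TEXTURES), **values)


def randomized_episodes(num_episodes: int, seed: int = 0) -> list[SceneParams]:
    """Make a fresh random draw for every training episode (seeded for reproducibility)."""
    rng = random.Random(seed)
    return [sample_scene(rng) for _ in range(num_episodes)]


if __name__ == "__main__":
    for episode, scene in enumerate(randomized_episodes(3, seed=42)):
        print(episode, scene)
        # In a real pipeline, a hypothetical hook such as sim.reset(scene) would
        # apply these values before the policy is rolled out and updated.
```

Sampling uniformly within hand-picked ranges is the simplest variant. The ranges themselves are a design choice: ranges that are too narrow leave the reality gap open, while ranges that are too wide can make the task needlessly hard to learn.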

For the technical audience—the AI engineers and researchers—this development signals that simulation fidelity and domain randomization techniques have reached a level of maturity previously thought years away. We are moving from simulation as a *tool for visualization* to simulation as the *primary training ground*.

The Broader Ecosystem: Competition and Foundation Models

Ai2's breakthrough isn't happening in isolation. It is part of a massive industry pivot toward making simulation the backbone of embodied intelligence. The commercial landscape is intensely competitive, driving rapid innovation:

The Platform War in Simulation

Major technology players are not just watching; they are building the infrastructure this shift requires. NVIDIA, with its GPU acceleration and integrated physics tools such as Isaac Sim, and Meta, which focuses on building large, complex virtual worlds for training embodied agents, are setting the standard for synthetic data generation [Meta's Investment in Simulation for Robotics]. For investors and strategists, tracking which platform providers win the "simulation wars" is crucial, as they will shape the deployment landscape for future robotics.

The Rise of Generalist Robots via Foundation Models

The reason these simulation environments are so effective now is likely tied to the underlying AI architecture. We are seeing the application of **Foundation Models**—massive, general-purpose AI models trained on vast datasets—to robotics.

If a model has already learned general concepts like object recognition, spatial reasoning, and grasping mechanics from internet-scale image and text data, training it in a simulator becomes less about learning *from scratch* and more about *fine-tuning* a highly capable pre-existing brain for the physical world. Projects like Google DeepMind's Robotic Transformer 2 (RT-2) exemplify this, demonstrating how vision-language models trained on web data can be adapted to output actions that control physical systems [Google's RT-2 Robotics Transformer].

This connection between large language/vision models and physical action is plausibly a major engine behind the success of simulation-only training. The model isn't just learning to move the arm; it’s learning to interpret complex, real-world instructions within a simulated physics sandbox.

Practical Implications: From Factory Floor to Living Room

The ability to train robots without physical data collection shifts robotics development from a hardware-bound *engineering* challenge toward a *software* challenge. The implications ripple across every industry that relies on automation.

1. Manufacturing and Logistics: Hyper-Scalability

Today, deploying a new robotic arm for a novel task often requires days or weeks of on-site tuning and testing. With simulation-first training, companies can run thousands of virtual workers to test process changes, stress-test supply chains, and optimize layouts before a single piece of real hardware is moved. This could drastically reduce downtime and capital expenditure (CapEx).

2. Consumer Robotics and Assistive Tech: Democratization

The high cost of data collection has kept advanced general-purpose robots expensive and niche. If training costs plummet, the barrier to entry for building robots that can handle household chores or assist the elderly drops dramatically. We could see an explosion in specialized, yet versatile, home robots.

3. Faster Iteration Cycles

The cycle time for improvement shrinks. An issue discovered in deployment can be reproduced in the simulator, the model retrained overnight, and the improved version redeployed the next morning, without scheduling physical retraining sessions. This continuous, rapid iteration is the hallmark of successful software platforms, and robotics is finally joining that club.

The Essential Caution: Validating the Unseen

While the excitement is warranted, as analysts, we must address the inherent risks. When a model skips real-world data collection, it also skips real-world validation checkpoints. This raises profound questions about safety and robustness.

The Validation Crisis

If the robot has only ever seen virtual environments, however heavily randomized, how do we guarantee it won't react unexpectedly when confronted with a slightly sticky surface, glare off a window, or an unexpected piece of debris? The focus must now shift heavily toward rigorous safety protocols for deployment.

Research into safety validation for sim-to-real systems becomes paramount. This involves creating mathematical proofs or testing suites that actively search for failure modes within the simulation that might translate to danger in reality [IEEE on Safety in Sim-to-Real Systems]. For robotics operating outside controlled environments (like autonomous driving or assistive care), regulatory bodies will need new frameworks to certify AI trained entirely in a synthetic world.
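A failure-mode search can be sketched, under heavy simplification, as random falsification: sample many scenarios, run each one, and keep those that violate a safety condition. In this hypothetical example, a closed-form braking-distance formula stands in for a full simulator rollout, and the operating ranges are invented for illustration. More sophisticated suites can replace uniform sampling with adaptive or adversarial search, but the shape of the loop is the same.

```python
import random

G = 9.81  # gravitational acceleration, m/s^2


def toy_episode(speed: float, friction: float, obstacle_dist: float) -> float:
    """Toy stand-in for a simulator rollout; returns a safety margin in metres.

    Assuming braking is limited purely by friction, braking distance on a surface
    with friction coefficient mu is v^2 / (2 * mu * g). A negative margin means
    the robot cannot stop before reaching the obstacle.
    """
    braking_dist = speed ** 2 / (2.0 * friction * G)
    return obstacle_dist - braking_dist


def search_failures(trials: int, seed: int = 0) -> list[tuple[float, float, float, float]]:
    """Random-search falsification: keep scenarios with a negative margin, worst first."""
    rng = random.Random(seed)
    failures = []
    for _ in range(trials):
        speed = rng.uniform(0.5, 2.0)      # m/s, hypothetical operating range
        friction = rng.uniform(0.05, 1.0)  # deliberately includes very slippery floors
        obstacle = rng.uniform(0.2, 3.0)   # metres to the nearest obstacle
        margin = toy_episode(speed, friction, obstacle)
        if margin < 0:
            failures.append((margin, speed, friction, obstacle))
    return sorted(failures)  # tuples sort by margin first, so the worst case leads


if __name__ == "__main__":
    for margin, speed, friction, obstacle in search_failures(10_000, seed=1)[:3]:
        print(f"margin={margin:.2f} m  speed={speed:.2f} m/s  "
              f"friction={friction:.2f}  obstacle={obstacle:.2f} m")
```

The output is a ranked list of concrete scenarios (speed, floor friction, obstacle distance) that engineers can replay, inspect, and feed back into training or into the deployment constraints.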

For technical teams, this means new benchmarks focusing not just on average success rate, but on the *distribution* of possible outcomes. If the simulation is the primary teacher, the simulator itself must be audited as rigorously as the AI model it produces.
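To make the point about distributions concrete, here is a minimal sketch of an evaluation summary that reports the worst tail alongside the mean. The metric names and the default tail fraction `alpha` are illustrative choices, not an established benchmark. `tail_mean` is the empirical conditional value-at-risk, meaning the average of the worst `alpha` fraction of episodes.

```python
import math
from statistics import mean


def outcome_report(scores: list[float], alpha: float = 0.05) -> dict[str, float]:
    """Summarize evaluation episodes by their tail as well as their average.

    scores: one outcome per episode, higher is better (e.g. task reward or safety margin).
    alpha:  fraction of worst episodes treated as the tail.
    """
    if not scores:
        raise ValueError("need at least one episode")
    ordered = sorted(scores)
    k = max(1, math.ceil(alpha * len(ordered)))  # number of episodes in the worst tail
    return {
        "mean": mean(ordered),
        "worst": ordered[0],
        "tail_cutoff": ordered[k - 1],   # best score still inside the worst tail
        "tail_mean": mean(ordered[:k]),  # empirical CVaR: average of the worst k
    }


if __name__ == "__main__":
    steady = [0.9] * 100                 # hypothetical policy A: consistent
    brittle = [0.95] * 95 + [-0.05] * 5  # hypothetical policy B: same mean, bad tail
    print(outcome_report(steady))
    print(outcome_report(brittle))
```

Both hypothetical policies average 0.9, so a mean-only benchmark ranks them as equal. The tail metrics expose policy B's five failed episodes.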

Actionable Insights for Leaders

This trend demands proactive strategy from technology leaders:

- Invest in Simulation Infrastructure: If your company plans to use custom automation in the next three years, identify which simulation and digital-twin platforms best align with your hardware and begin building internal expertise now. This is the new factory floor.

- Re-skill Your Workforce: The demand for roboticists who are also expert simulation engineers (familiar with physics engines, domain randomization, and massive synthetic data pipelines) will skyrocket. Training current staff in these digital tools is non-negotiable.

- Prioritize "Safe-Break" Boundaries: For early deployments, mandate that robots trained purely in simulation operate initially in constrained environments or with a human supervisor ready to take over. Use the first few weeks of real-world operation not just to prove success, but specifically to uncover simulation blind spots.
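The "safe-break" idea can be sketched as a thin runtime guard between the learned policy and the motors. Everything here is a hypothetical illustration for a mobile base moving in a plane. The `SafetyEnvelope` class, its limits, and the handover flag are assumptions, not a standard interface. It caps speed, stops and requests human takeover when the robot leaves an allowed workspace, and logs every intervention so the first weeks of operation yield a list of candidate simulation blind spots.

```python
import math
from dataclasses import dataclass, field


@dataclass
class SafetyEnvelope:
    """Hypothetical runtime guard for an early, supervised deployment (planar base)."""
    max_speed: float                # m/s cap, deliberately below the hardware limit
    x_bounds: tuple[float, float]   # allowed workspace along x, metres
    y_bounds: tuple[float, float]   # allowed workspace along y, metres
    incidents: list[str] = field(default_factory=list)  # candidate simulation blind spots

    def check_command(self, x: float, y: float, vx: float, vy: float) -> tuple[float, float, bool]:
        """Return a safe velocity command and whether a human should take over."""
        inside = (self.x_bounds[0] <= x <= self.x_bounds[1]
                  and self.y_bounds[0] <= y <= self.y_bounds[1])
        if not inside:
            self.incidents.append(f"left workspace at ({x:.2f}, {y:.2f})")
            return 0.0, 0.0, True  # stop and hand control to the supervisor
        speed = math.hypot(vx, vy)
        if speed > self.max_speed:
            scale = self.max_speed / speed  # keep the direction, shrink the magnitude
            self.incidents.append(f"speed {speed:.2f} m/s clamped to {self.max_speed:.2f}")
            return vx * scale, vy * scale, False
        return vx, vy, False


if __name__ == "__main__":
    guard = SafetyEnvelope(max_speed=0.5, x_bounds=(0.0, 2.0), y_bounds=(0.0, 1.0))
    print(guard.check_command(1.0, 0.5, 3.0, 4.0))  # policy asks for 5 m/s: scaled to 0.5 m/s
    print(guard.check_command(2.5, 0.5, 0.1, 0.0))  # outside the workspace: stop, hand over
    print(guard.incidents)
```

Keeping the guard separate from the policy means the limits can be relaxed gradually as real-world evidence accumulates, without retraining the model.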

The successful training of robotic models entirely in simulation marks the true industrialization of AI for physical tasks. It signals an era where the speed of innovation in the digital realm can finally translate directly and rapidly into real-world capability, ushering in a level of automation previously confined to science fiction.