Elon Musk's xAI Rebuild: Why Foundational Flaws Force AI Giants to Start Over

In artificial intelligence development, speed is often equated with survival. The race to build the next great foundational model, the core engine powering products like Grok or ChatGPT, is intense. However, a recent admission from Elon Musk about his AI venture, xAI, has sent ripples through the industry: the company "was not built right the first time around," necessitating a full architectural restructuring.

This admission is more than a routine corporate announcement; it is a reality check for the entire AI ecosystem. It shows that the choices made in the foundational layer, the bedrock of an AI system, carry outsized consequences, and that cutting corners in the rush to deploy can lead to costly, time-consuming pivots later. To understand the scope of this pivot, we need to look at the competitive landscape, the inherent difficulty of building frontier models, and the technological shifts that may be forcing xAI’s hand.

The Performance Deficit: Why Foundations Matter

When a leader of a well-funded, high-profile AI company states the initial build was faulty, the immediate question is: faulty compared to what? The context provided by competitors is crucial. The AI landscape is not static; models like GPT-4 and Claude 3 are constantly setting new standards for reasoning, complex instruction following, and coherence.

Benchmarking the Gap

Musk’s admission suggests that Grok, in its current iteration, had not reached performance parity with the established leaders. Comparisons of Grok with GPT-4 and Claude 3 tend to show a familiar pattern: a model may excel in niche areas, such as Grok’s early advantage in drawing on real-time X (Twitter) data, while lagging significantly in core cognitive tasks like deep analytical reasoning or complex coding.

For AI developers and enthusiasts, the lesson is that raw data access is not enough. The underlying transformer architecture, the data-cleaning pipeline, and the alignment training must be sound from the start. With a weak foundation, adding more data or more compute produces rapidly diminishing returns, and the remedy Musk has chosen is to rebuild the core structure.

The Systemic Challenge: Engineering Debt in AI

Musk’s experience at xAI is not unique; it is a magnified example of a systemic problem facing almost every startup that attempts to build a frontier model from scratch. The pressure to demonstrate progress (to attract investment, secure partnerships, and satisfy public curiosity) pushes teams to prioritize rapid deployment over meticulous, long-term architectural planning.

The Cost of Speed Over Soundness

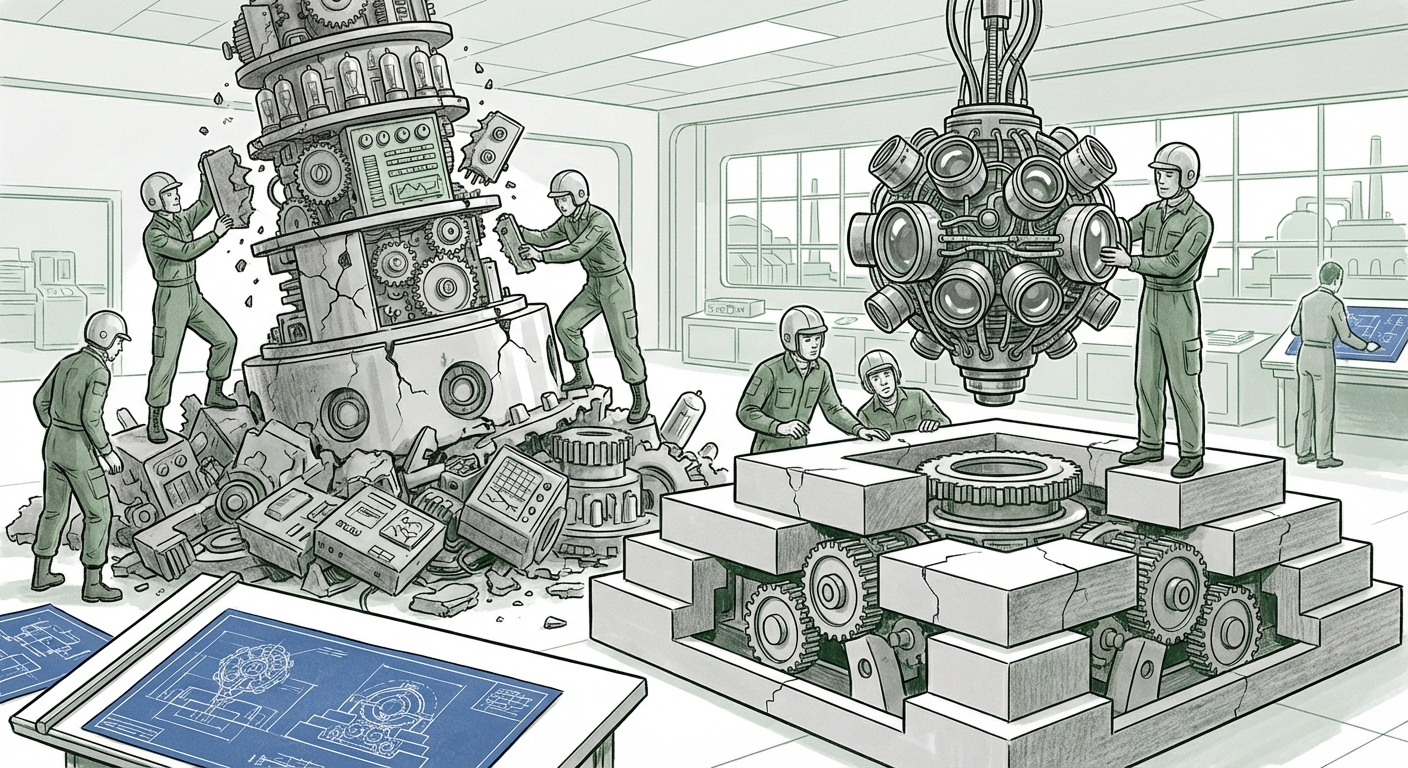

Imagine building a skyscraper. If you rush the concrete curing or use substandard steel in the foundational columns, you might get the first ten floors up quickly. But when you try to add the 50th floor, the entire structure becomes unstable. In AI, this "engineering debt" manifests as:

- Training Inefficiency: The original model architecture might require excessive computational resources (FLOPs) to achieve a result that a better-designed model could achieve more cheaply.

- Alignment Failures: Flaws in the initial safety and alignment training, such as RLHF (Reinforcement Learning from Human Feedback), become deeply embedded, making it difficult and expensive to correct biases or harmful outputs later on.

- Scalability Ceilings: The architecture might hit a hard limit on how many parameters it can effectively handle before performance degrades.
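To make the "Training Inefficiency" point concrete, a widely used rule of thumb from the scaling-law literature estimates the training compute of a dense transformer as roughly six floating-point operations per parameter per training token. Here $C$ is training compute in FLOPs, $N$ is the parameter count, and $D$ is the number of training tokens. The model size and token count below are hypothetical round numbers chosen for the arithmetic, not figures for Grok or any competitor.

$$
C \approx 6ND
$$

$$
C \approx 6 \times (7 \times 10^{9}) \times (2 \times 10^{12}) = 8.4 \times 10^{22}\ \text{FLOPs}
$$

If a less efficient architecture needs twice as many tokens to reach the same quality ($D' = 2D$), the cost doubles:

$$
C' \approx 6N(2D) = 1.68 \times 10^{23}\ \text{FLOPs}
$$

Because compute scales linearly with both parameters and tokens in this approximation, a design flaw that demands extra data or extra training passes shows up directly as extra cost.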

For technology strategists, this underscores a major trend: achieving true AGI (Artificial General Intelligence) requires architectural discipline, not just brute-force computation. The industry is learning that the money spent on a prototype model, which can reach $100 million or more, sometimes has to be written off entirely once the right long-term architecture becomes apparent.

The Talent Signal: Execution Through Reorganization

A "full restructuring" is rarely just about rewriting code; it is fundamentally about reorganizing human capital. Personnel shifts at xAI offer clues to Musk’s strategy for the relaunch. Is he aggressively seeking world-class experts in specific subfields, such as model distillation or synthetic data generation?

Defining the New Core Competency

If Musk is indeed poaching top researchers from established labs, that would signal that the rebuild is not just a refactoring of Grok-1 but a move toward an entirely new methodological approach. This focus on talent matters for businesses looking to hire or partner in the AI space. It suggests that the differentiator in the next cycle will be the quality and specific expertise of the engineering team building the core infrastructure, not just access to cloud computing resources.

This restructuring also affects the nature of competition. When a major player admits failure and rebuilds, it temporarily steps back from the immediate race, giving rivals a window to consolidate their lead. At the same time, it signals Musk’s commitment to catching up, which keeps the AI arms race fierce.

The Next Frontier: Embracing Multimodality

Perhaps the most significant driver behind the need for a foundational restart is the industry’s inexorable shift toward multimodality. The world is not purely text-based. Future useful AI systems must natively understand images, video, audio, and sensor data simultaneously—not as separate add-ons, but as integrated inputs woven into the primary architecture.

Beyond Text: The Integration Imperative

If xAI’s initial Grok model was optimized heavily for language processing on X data, it may lack the architectural framework needed to incorporate visual or auditory processing efficiently. Competitors are already making significant strides here (e.g., OpenAI’s Sora, Google’s Gemini). For xAI to be taken seriously as a long-term competitor, its new foundation must be *natively multimodal*.

This transition requires fundamental changes to how data is encoded and processed, often meaning that retrofitting these features onto a text-only model is harder than starting fresh with an architecture designed for cross-domain understanding. This forces xAI to align its long-term vision with the consensus future roadmap of AI development.
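As a rough illustration of what "natively multimodal" can mean at the input layer, many vision-language designs borrow the Vision Transformer approach. They cut an image into fixed-size patches and project each patch to the same embedding width the text tokens use. Both kinds of token are then fed into one sequence. The toy sketch below does this with plain Python lists and random weights. The dimensions, the function names, and the choice to place image tokens before text tokens are all assumptions for illustration, and nothing here describes xAI’s actual design.

```python
import random
from typing import List

Vector = List[float]
Matrix = List[Vector]


def patchify(image: Matrix, patch: int) -> List[Vector]:
    """Cut an H x W grayscale image into non-overlapping patch x patch tiles, each flattened row by row."""
    h, w = len(image), len(image[0])
    if h % patch or w % patch:
        raise ValueError("image height and width must be divisible by the patch size")
    tiles = []
    for r in range(0, h, patch):
        for c in range(0, w, patch):
            tiles.append([image[r + i][c + j] for i in range(patch) for j in range(patch)])
    return tiles


def project(vec: Vector, weights: Matrix) -> Vector:
    """Linear map: vec (length k) times weights (k x d) gives a length-d embedding."""
    d = len(weights[0])
    return [sum(vec[k] * weights[k][j] for k in range(len(vec))) for j in range(d)]


def random_matrix(rows: int, cols: int, rng: random.Random) -> Matrix:
    """Small random weights standing in for parameters a real model would learn."""
    return [[rng.gauss(0.0, 0.02) for _ in range(cols)] for _ in range(rows)]


def build_sequence(text_ids: List[int], text_table: Matrix, image: Matrix,
                   patch: int, patch_weights: Matrix) -> List[Vector]:
    """Embed image patches and text tokens into one sequence of equal-width vectors.

    Placing image tokens first is an arbitrary choice for this sketch.
    """
    image_tokens = [project(p, patch_weights) for p in patchify(image, patch)]
    text_tokens = [text_table[t] for t in text_ids]
    return image_tokens + text_tokens


if __name__ == "__main__":
    rng = random.Random(0)
    d_model, vocab, patch = 8, 10, 2
    image = [[float(4 * r + c) for c in range(4)] for r in range(4)]  # toy 4x4 image
    text_table = random_matrix(vocab, d_model, rng)  # one embedding row per text token id
    patch_weights = random_matrix(patch * patch, d_model, rng)  # flattened patch -> d_model
    seq = build_sequence([3, 1, 4], text_table, image, patch, patch_weights)
    print(len(seq), "tokens of width", len(seq[0]))
```

In a real model the patch projection and the text embeddings are trained together inside one network. That joint training is part of why retrofitting this path onto a finished text-only model tends to be harder than designing for it from the start.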

Implications for Business and Society: Actionable Insights

Musk’s public admission of an architectural failure has significant practical implications for everyone leveraging or building upon AI technology.

1. For Businesses Integrating AI Tools

Insight: Beware of "Fast-Follower" Models. If a powerful company like xAI can build a foundational model "wrong," smaller businesses integrating AI tools must exercise extreme diligence. Relying solely on vendor claims about performance is risky. Businesses must probe partners about their model’s architectural lineage: Was it trained for generality, or optimized for a narrow, immediate purpose?
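One practical way to act on this is to score candidate models on your own prompts rather than relying on published benchmarks. The sketch below assumes each model has been wrapped as a plain Python function from prompt to answer, and it uses simple exact-match scoring after normalizing case and whitespace. The function names and toy cases are hypothetical. Real evaluations usually need more forgiving metrics than exact match.

```python
from typing import Callable, Dict, List, Tuple

# A test case pairs a prompt with the answer you expect. Real cases should come
# from your own domain data, not from a vendor's published benchmark.
Case = Tuple[str, str]
Model = Callable[[str], str]


def normalize(text: str) -> str:
    """Lower-case and collapse whitespace so trivial formatting differences don't count."""
    return " ".join(text.lower().split())


def exact_match_accuracy(model: Model, cases: List[Case]) -> float:
    """Fraction of cases where the model's normalized answer equals the expected one."""
    if not cases:
        raise ValueError("need at least one test case")
    hits = sum(normalize(model(prompt)) == normalize(expected) for prompt, expected in cases)
    return hits / len(cases)


def compare(models: Dict[str, Model], cases: List[Case]) -> Dict[str, float]:
    """Score every candidate model on the same cases."""
    return {name: exact_match_accuracy(fn, cases) for name, fn in models.items()}


if __name__ == "__main__":
    cases = [("2+2?", "4"), ("Capital of France?", "Paris"), ("Opposite of hot?", "cold")]
    # Hypothetical stand-ins for two vendor models, implemented as lookup tables.
    answers_a = {"2+2?": "4", "Capital of France?": " paris ", "Opposite of hot?": "warm"}
    answers_b = {"2+2?": "four", "Capital of France?": "Paris", "Opposite of hot?": "freezing"}
    models = {
        "model-a": lambda p: answers_a.get(p, ""),
        "model-b": lambda p: answers_b.get(p, ""),
    }
    for name, score in compare(models, cases).items():
        print(f"{name}: {score:.2f}")
```

Keeping the case set private and rerunning it whenever a provider ships a new or rebuilt model turns vendor performance claims into something you can check.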

If you are building proprietary applications on top of an LLM, ensure your integration layer is decoupled enough so that a major architectural shift by the provider (like xAI’s reset) doesn't completely break your product overnight.
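Here is a minimal sketch of that decoupling, assuming a Python application. The application codes against a small provider-neutral interface, and each vendor sits behind its own adapter, chosen by a configuration string. The adapter classes here are hypothetical placeholders that return canned strings. In a real system each one would wrap that vendor’s own SDK, and those calls are deliberately not shown.

```python
from typing import Callable, Dict, Protocol


class ChatModel(Protocol):
    """Provider-neutral interface; application code depends only on this."""

    def complete(self, prompt: str) -> str:
        ...


class EchoAdapter:
    """Hypothetical adapter standing in for vendor A's SDK."""

    def complete(self, prompt: str) -> str:
        # A real adapter would call the vendor SDK here and translate its
        # response object into a plain string.
        return f"[vendor-a] {prompt}"


class ReverseAdapter:
    """Hypothetical adapter standing in for vendor B's SDK."""

    def complete(self, prompt: str) -> str:
        return f"[vendor-b] {prompt[::-1]}"


# Registry mapping a config value to a factory. Adding or replacing a provider
# means changing an entry here, not touching application code.
ADAPTERS: Dict[str, Callable[[], ChatModel]] = {
    "vendor-a": EchoAdapter,
    "vendor-b": ReverseAdapter,
}


def load_model(provider: str) -> ChatModel:
    """Instantiate the adapter named in configuration."""
    try:
        return ADAPTERS[provider]()
    except KeyError:
        raise ValueError(f"unknown provider: {provider!r}") from None


def summarize(model: ChatModel, text: str) -> str:
    """Application logic: knows nothing about which vendor is behind `model`."""
    prompt = f"Summarize: {text.strip()}"
    return model.complete(prompt)


if __name__ == "__main__":
    for name in ("vendor-a", "vendor-b"):
        print(name, "->", summarize(load_model(name), "  quarterly report  "))
```

With this shape, a provider’s architectural reset means updating or swapping one adapter and rerunning your tests, rather than editing every call site.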

2. For Investors and Stakeholders

Insight: Valuation Must Account for Rebuild Risk. Investment theses in frontier AI must incorporate the probability of needing a major foundational pivot. A large initial investment might be necessary, but subsequent funding rounds should be contingent on demonstrating architectural robustness and alignment with emerging multimodal standards, not just user growth metrics.

3. For the Future of AI Safety and Alignment

Insight: Safety is Foundational, Not an Afterthought. A rebuilt model offers a chance to embed safety protocols deeper into the core design. If the first build failed structurally, alignment may have been bolted on late in the process; the rebuild is an opportunity to make safety inherent. This sets an important precedent: long-term viability in AI depends on integrating alignment from the first line of code, so the work becomes less about patching and more about architectural integrity.

Conclusion: The Necessary Pause in the AI Race

Elon Musk’s admission about xAI is a powerful narrative moment. It validates the concerns of skeptics who argue that the pace of AI development has outstripped the industry's ability to build robust, sustainable systems. While the short-term effect might be a temporary slowdown for Grok, the long-term implication is positive: a more mature industry willing to acknowledge and correct deep structural errors.

The future of AI innovation will likely favor those who can balance the need for speed with the discipline of sound engineering. The rebuild at xAI signals that the next generation of winning models won’t just be bigger; they will be architecturally superior, natively multimodal, and built on foundations designed to withstand the scrutiny of the world’s most ambitious AI race.